Persistent Memory (PMEM) is an exciting storage tier with much lower latency than SSDs. LINBIT® has optimized DRBD® for the case where its metadata is stored on PMEM/NVDIMM.

This article applies to both of the following types of PMEM:

- Traditional NVDIMM-N: DRAM paired with NAND flash. On power failure, a backup power source (supercapacitor or battery) saves the contents of the DRAM to the flash. When main power returns, the DRAM contents are restored from the flash. These modules have exactly the same timing characteristics as DRAM and are available in sizes of 8GB, 16GB, or 32GB per DIMM.

- Intel’s new Optane DC Persistent Memory: These DIMMs are built using a new technology called 3D XPoint, which is inherently non-volatile and has only slightly higher access times than pure DRAM. The modules come in much higher capacities than traditional NVDIMMs: 128GB, 256GB, and 512GB.

DRBD requires metadata to keep track of which blocks are in sync with its peers. The metadata consists of two main parts. The first is the bitmap, which records exactly which 4KiB blocks may be out of sync. It is used while a peer is disconnected, so that only the blocks that changed need to be synced when the nodes reconnect. The second is the activity log, which records which regions of the volume have ongoing I/O or had I/O activity recently. After a crash, it is used to ensure that the nodes are brought fully back in sync. The activity log consists of 4MiB extents which, by default, cover about 5GiB of the volume.
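To make the two structures more concrete, here is a minimal sketch of how a write's byte offset maps to bitmap bits and to activity-log extents, using the 4KiB and 4MiB granularities described above. The function names are hypothetical. The value of 1237 activity-log extents is assumed to be the default `al-extents` setting. At 4MiB each, that works out to 4948MiB (about 4.8GiB), which is consistent with the "about 5GiB" figure.

```bash
#!/usr/bin/env bash
# Sketch: map a write (byte offset + length) to the bitmap bits and the
# activity-log extents it touches. Granularities follow the article;
# the function names are hypothetical, not DRBD tools.

BM_BLOCK=$((4 * 1024))            # bytes tracked by one bitmap bit (4KiB)
AL_EXTENT=$((4 * 1024 * 1024))    # bytes covered by one AL extent (4MiB)

# bitmap_bits OFFSET LENGTH -> "first last" bitmap bit indices
bitmap_bits() {
    local off=$1 len=$2
    echo "$(( off / BM_BLOCK )) $(( (off + len - 1) / BM_BLOCK ))"
}

# al_extents OFFSET LENGTH -> "first last" activity-log extent indices
al_extents() {
    local off=$1 len=$2
    echo "$(( off / AL_EXTENT )) $(( (off + len - 1) / AL_EXTENT ))"
}

# Assumption: 1237 is the default number of activity-log extents.
AL_EXTENTS_DEFAULT=1237
echo "AL coverage: $(( AL_EXTENTS_DEFAULT * AL_EXTENT / 1024 / 1024 )) MiB"

bitmap_bits 8192 4096       # 4KiB write at offset 8KiB: prints "2 2"
al_extents 4190208 8192     # 8KiB write across the first 4MiB boundary: prints "0 1"
```

The second call shows that a small write can straddle two extents. In that case, both extents would need to be in the activity log before the write goes ahead.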

Since the DRBD metadata is small and frequently accessed, it is an ideal candidate for PMEM. A single 8GiB NVDIMM can store enough metadata for 100 volumes of 1TiB each, replicated between 3 nodes.
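The sizing claim can be checked with a little arithmetic. The sketch below is a simplification. It counts only the bitmap, at one bit per 4KiB block for each peer. With 3 nodes, each node tracks 2 peers. It ignores the much smaller activity log and superblock, and the function name is hypothetical.

```bash
#!/usr/bin/env bash
# Back-of-the-envelope check: 100 volumes of 1TiB, replicated between
# 3 nodes, so each node keeps a bitmap for each of its 2 peers.
# Simplification (assumption): bitmap only; AL and superblock ignored.

KiB=1024; MiB=$((1024 * KiB)); GiB=$((1024 * MiB)); TiB=$((1024 * GiB))

# bitmap_bytes VOLUME_BYTES PEERS -> bitmap size in bytes on one node
bitmap_bytes() {
    local vol=$1 peers=$2
    # one bit per 4KiB block, 8 bits per byte, one bitmap per peer
    echo $(( vol / (4 * KiB) / 8 * peers ))
}

per_volume=$(bitmap_bytes "$TiB" 2)
total=$(( 100 * per_volume ))
echo "per volume: $(( per_volume / MiB )) MiB"
echo "100 volumes: $(( total / MiB )) MiB of an 8GiB ($(( 8 * GiB / MiB )) MiB) NVDIMM"
```

Running it reports 64MiB per volume and 6400MiB in total, which fits inside an 8GiB NVDIMM with room to spare.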

PMEM outperforms

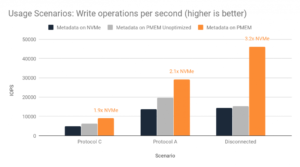

DRBD 9 has been optimized to access metadata on PMEM directly using memory operations. This approach is very efficient and yields significant performance gains. The improvement is most dramatic when the metadata is updated most often. This happens when writes are issued serially, that is, with an I/O depth of 1. In that case, scattered writes force the activity log to be updated on nearly every write. Here we compare three configurations: metadata on a separate NVMe device, metadata on PMEM without the optimizations, and metadata on PMEM with them.
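Why does scattered I/O keep the activity log so busy? A simple model helps here. It is an assumption, not a measurement. Suppose random 4KiB writes are spread uniformly over the 48GiB region (`--size=48G`) used by the fio test under Technical details. Suppose also that the activity log holds an assumed default of 1237 extents of 4MiB. A write avoids an activity-log update only if its extent is already active. In steady state, that happens with probability active extents / total extents.

```bash
#!/usr/bin/env bash
# Rough model (assumption): uniformly random writes over a 48GiB region,
# an activity log of 1237 extents of 4MiB each. A write needs an AL update
# when its extent is not already active; in steady state that happens with
# probability (total - active) / total.

EXTENT_MIB=4
AL_EXTENTS=1237                 # assumed default al-extents
REGION_MIB=$((48 * 1024))       # 48GiB, matching --size=48G

total_extents=$(( REGION_MIB / EXTENT_MIB ))
miss_permille=$(( (total_extents - AL_EXTENTS) * 1000 / total_extents ))
echo "extents in region: $total_extents"
echo "writes needing an AL update: ~$(( miss_permille / 10 )).$(( miss_permille % 10 ))%"
```

Under these assumptions, roughly 90% of writes need a metadata update. At an I/O depth of 1, each update waits its turn in the write path, so metadata latency matters a great deal for this workload.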

As can be seen, placing the DRBD metadata on a PMEM device results in a massive performance boost for this kind of workload.

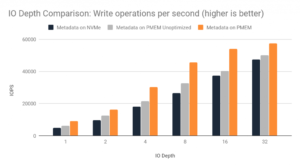

Impact with concurrent I/O

When I/O is submitted concurrently, DRBD does not have to access the metadata as often, because activity log updates for several in-flight writes can be combined. Hence we do not expect the improvement to be quite as dramatic. Nevertheless, there is still a significant performance boost, as the results show.
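One rough way to picture the effect of concurrency is the idealized model below. In it, the activity-log updates needed by writes that are in flight together are combined into a single metadata write. This is a simplifying assumption for illustration only. Real batching depends on DRBD internals, and `al_writes` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Idealized model (assumption): activity-log updates needed by writes that
# are in flight together are combined into one metadata write, so DEPTH
# pending updates cost a single write. Real DRBD batching is more limited.

# al_writes UPDATES DEPTH -> metadata writes needed (ceiling of UPDATES/DEPTH)
al_writes() {
    local updates=$1 depth=$2
    echo $(( (updates + depth - 1) / depth ))
}

# Assumption: 899 of every 1000 scattered writes need an AL update.
for depth in 1 4 16 32; do
    echo "iodepth $depth: $(al_writes 899 "$depth") metadata writes per 1000 writes"
done
```

Even in this idealized form, the number of metadata writes drops quickly as the I/O depth grows. Faster metadata therefore accounts for a smaller share of the total write cost.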

If your workload has a very high I/O depth, you may want to try DRBD 10, which performs especially well in that situation. See https://linbit.com/en/drbd10-vs-drbd9-performance/.

Technical details

The above tests were carried out on a pair of 16-core servers equipped with NVMe storage and a direct Ethernet connection. Each server had an 8GiB DDR4 NVDIMM from Viking installed. DRBD 9.0.17 was used for the tests without the PMEM optimizations, and DRBD 9.0.20 for the rest. I/O was generated using the fio tool with the following parameters:

    fio --name=test --rw=randwrite --direct=1 --numjobs=8 \
        --ioengine=libaio --iodepth=$IODEPTH --bs=4k --time_based=1 \
        --runtime=60 --size=48G --filename=/dev/drbd500
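The `$IODEPTH` variable in the command suggests it was run once per I/O depth. A minimal sketch of such a sweep follows. The list of depths is hypothetical, because the article does not state which values were tested. By default, the script only prints the commands. Setting `DRY_RUN=0` runs fio for real and overwrites data on `/dev/drbd500`.

```bash
#!/usr/bin/env bash
# Hypothetical sweep over I/O depths using the fio parameters above.
# WARNING: with DRY_RUN=0 this writes to /dev/drbd500 and destroys its data.
# The list of depths is an assumption, not taken from the article.
set -euo pipefail

DRY_RUN=${DRY_RUN:-1}
DEPTHS=(1 2 4 8 16 32)

for IODEPTH in "${DEPTHS[@]}"; do
    cmd=(fio --name=test --rw=randwrite --direct=1 --numjobs=8
         --ioengine=libaio --iodepth="$IODEPTH" --bs=4k --time_based=1
         --runtime=60 --size=48G --filename=/dev/drbd500)
    if [ "$DRY_RUN" = 1 ]; then
        echo "${cmd[*]}"     # print only
    else
        "${cmd[@]}"          # run fio for this depth
    fi
done
```

Building the command as a bash array keeps each fio option a separate argument, so the same list can be printed or executed without quoting problems.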

If you have technical questions, don’t hesitate to subscribe to our mailing list.