LINSTOR ユーザーズガイド

はじめにお読みください

このガイドは、software-defined storage (SDS) ソリューションである LINSTOR® のリファレンスガイドおよびハンドブックとして使用することを目的としています。

| このガイドは全体を通して、あなたがLINSTORと関連ツールの最新版を使っていると仮定します。 |

このガイドは次のように構成されています。

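- Introduction to LINSTOR gives a basic overview of LINSTOR and explains LINSTOR concepts and terminology.

- Basic Administrative Tasks and System Setup covers basic LINSTOR functionality and describes how to carry out common administrative tasks. You can also use this chapter as a step-by-step guide for deploying a basic LINSTOR setup.

- Further LINSTOR Tasks shows a variety of advanced and important LINSTOR tasks and configurations, so that you can use LINSTOR in more complex ways.

- Administering LINSTOR by GUI covers the graphical client approach to managing LINSTOR clusters that is available to LINBIT® customers.

- LINSTOR Integrations has chapters on implementing LINSTOR-based storage solutions together with platforms and technologies such as Kubernetes, Proxmox VE, OpenNebula, Docker, and OpenStack, by using the LINSTOR API.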

LINSTORについて

1. LINSTORの紹介

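To use LINSTOR® effectively, read this introductory chapter first. It gives an overview of the software, explains how it works and how it deploys storage, and introduces the important concepts and terms.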

1.1. LINSTORの概要

LINBIT社が開発したオープンソースの構成管理システム、LINSTORは、Linuxシステム上のストレージを管理します。このシステムは、ノードのクラスター上でLVM論理ボリュームやZFS ZVOLを管理し、異なるノード間での複製にDRBDを使用してユーザーやアプリケーションにブロックストレージデバイスを提供します。スナップショット、暗号化、HDDバックアップデータのSSDへのキャッシングなど、いくつかの機能があります。

1.1.1. LINSTOR はどこで使用されているのか

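LINSTOR was originally developed to manage DRBD resources. You can still use LINSTOR to manage DRBD, but LINSTOR has since evolved. It is now often integrated with software stacks higher up, to provide persistent storage more easily and more flexibly than would otherwise be possible within those stacks.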

LINSTORは単体で使用することもできますし、Kubernetes、OpenShift、OpenNebula、OpenStack、Proxmox VEなどの他のプラットフォームと統合することも可能です。LINSTORは、オンプレミスのベアメタル環境で実行することもできますし、仮想マシン(VM)、コンテナ、クラウド、ハイブリッド環境でも利用することができます。

1.1.2. LINSTORがサポートするストレージと関連技術

LINSTORは、次のストレージプロバイダーおよび関連技術と連携して動作することができます:

- LVM and LVM thin volumes
- ZFS and ZFS thin volumes
- File and FileThin (loop devices)
- Diskless
- SPDK (remote)
- Microsoft Windows Storage Spaces and thin Storage Spaces
- EBS (target and initiator)
- Device mapper cache (dm-cache) and writecache (dm-writecache)
- bcache
- LUKS
- DRBD

LINSTORを使用することで、これらのテクノロジーを単独で扱うか、またはさまざまな有益な組み合わせで使用することができます。

1.2. LINSTORの動作原理

機能するLINSTORセットアップには、linstor-controller.service というsystemdサービスとして実行されるアクティブなコントローラーノードが1つ必要です。これはLINSTORコントロールプレーンであり、LINSTORコントローラーノードがLINSTORサテライトノードと通信する場所です。

セットアップには、LINSTORサテライトソフトウェアをsystemdサービスとして実行する1つ以上のサテライトノードが必要です。LINSTORサテライトサービスは、ノード上でストレージや関連アクションを容易にします。たとえば、ユーザーやアプリケーションにデータストレージを提供するためにストレージボリュームを作成することができます。ただし、サテライトノードはクラスタに物理ストレージを提供する必要はありません。例えば、DRBDクォーラムの目的でLINSTORクラスタに参加する「ディスクレス」サテライトノードを持つことができます。

| ノードがLINSTORコントローラーとサテライトサービスの両方を実行し、”Combined”の役割を果たすことも可能です。 |

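Storage technologies, such as DRBD replication, are implemented on the LINSTOR satellite nodes. In LINSTOR, the control plane and the data plane are separate and can function independently. This means, for example, that you can update a LINSTOR controller node or the LINSTOR controller software while your LINSTOR satellite nodes continue to provide storage (and, if you use DRBD, to replicate it) to users and applications without interruption.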

このガイドでは、便宜上、LINSTORセットアップはしばしば「LINSTORクラスター」と呼ばれますが、有効なLINSTORセットアップは、Kubernetesなどの他のプラットフォーム内で統合として存在することがあります。

ユーザーは、CLIベースのクライアントやグラフィカルユーザーインタフェース(GUI)を使用してLINSTORとやり取りすることができます。これらのインタフェースは、LINSTOR REST APIを利用しています。また、LINSTORは、このAPIを使用するプラグインやドライバを利用して、他のプラットフォームやアプリケーションと統合することができます。

LINSTORコントローラーとREST API間の通信はTCP/IPを介して行われ、SSL/TLSを使用してセキュリティを確保することができます。

The southbound drivers that LINSTOR uses to interact with physical storage are LVM, thin LVM, and ZFS.

1.3. インストール可能なコンポーネント

LINSTORのセットアップには、インストール可能なコンポーネントが3つあります:

-

LINSTOR controller

-

LINSTOR satellite

-

LINSTOR user interfaces (LINSTOR client and LINBIT SDS GUI)

これらのインストール可能なコンポーネントは、コンパイルできるソースコードであるか、または事前にビルドされたパッケージであり、ソフトウェアをインストールして実行するために使用できます。

1.3.1. LINSTOR controller

linstor-controller サービスは、クラスタ全体の構成情報を保持するデータベースに依存しています。LINSTORコントローラーソフトウェアを実行しているノードやコンテナは、リソース配置、リソース構成、およびクラスタ全体の状態を必要とするすべての運用プロセスのオーケストレーションを担当しています。

LINSTORでは複数のコントローラを使用することができます。たとえば、 高可用性のLINSTORクラスタを設定する ときには、複数のコントローラを利用することができます。ただし、アクティブなコントローラは1つだけです。

前述のように、LINSTORコントローラーは、管理するデータプレーンとは別のプレーンで動作します。コントローラーサービスを停止し、コントローラーノードを更新または再起動しても、LINSTORサテライトノードにホストされているデータにアクセスできます。LINSTORサテライトノード上のデータには引き続きアクセスし、提供することができますが、実行中のコントローラーノードがないと、サテライトノードでのLINSTORの状態や管理タスクを実行することはできません。

1.3.2. LINSTOR satellite

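The linstor-satellite service runs on every node where LINSTOR consumes local storage or provides storage to services. The service is stateless and receives all the information it needs from the node or container that runs the LINSTOR controller service. The LINSTOR satellite service runs programs such as lvcreate and drbdadm. It acts like an agent on a node or in a container that carries out the instructions that it receives from the LINSTOR controller node or container.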

1.3.3. LINSTOR ユーザーインターフェース

LINSTORとのインターフェースを行う必要がある場合、そのアクティブなLINSTORコントローラーに指示を送信するには、LINSTORクライアント、またはLINBIT SDS GUIのどちらかのユーザーインターフェース(UI)を使用できます。

これらのUIは、両方ともLINSTORの REST API に依存しています。

LINSTOR クライアント

「linstor」というLINSTORクライアントは、アクティブなLINSTORコントローラーノードにコマンドを送信するためのコマンドラインユーティリティです。これらのコマンドは、クラスタ内のストレージリソースを作成または変更するようなアクション指向のコマンドである場合もありますし、LINSTORクラスタの現在の状態に関する情報を収集するためのステータスコマンドである場合もあります。

LINSTORクライアントは、有効なコマンドと引数を続けて入力することで使用することもできますし、クライアントの対話モードでは、単に linstor と入力することで使用することができます。

LINSTORクライアントの使用方法に関する詳細情報は、このユーザーガイドのLINSTORクライアントの使用 セクションで見つけることができます。

# linstor
Use "help <command>" to get help for a specific command.

Available commands:
- advise (adv)
- backup (b)
- controller (c)
- drbd-proxy (proxy)
- encryption (e)
- error-reports (err)
- file (f)
- help
- interactive
- key-value-store (kv)
- list-commands (commands, list)
- node (n)
- node-connection (nc)
- physical-storage (ps)
- remote
- resource (r)
- resource-connection (rc)
- resource-definition (rd)
- resource-group (rg)
- schedule (sched)
- snapshot (s)
- sos-report (sos)
- space-reporting (spr)
- storage-pool (sp)
- volume (v)
- volume-definition (vd)
- volume-group (vg)
LINSTOR ==>

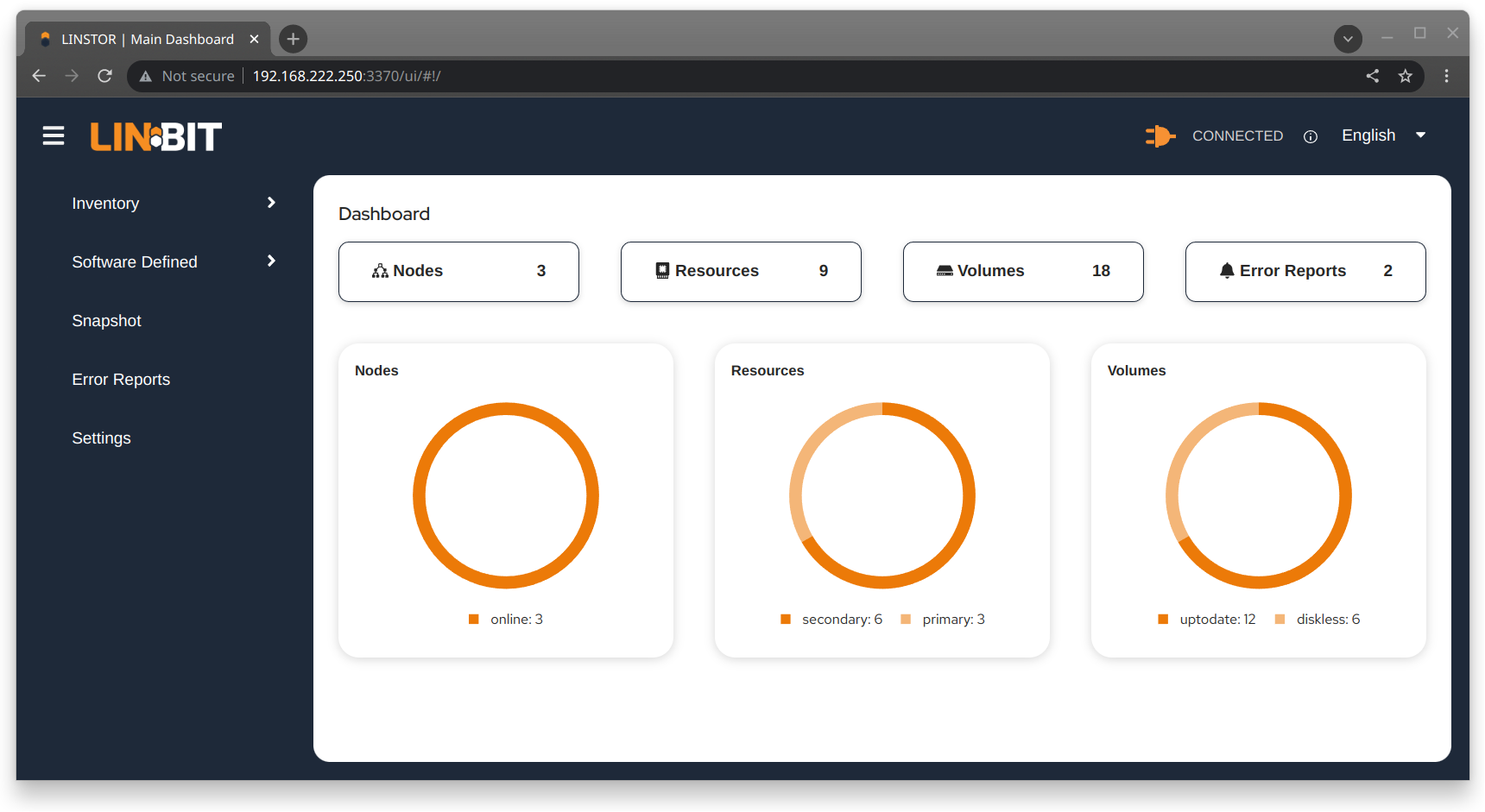

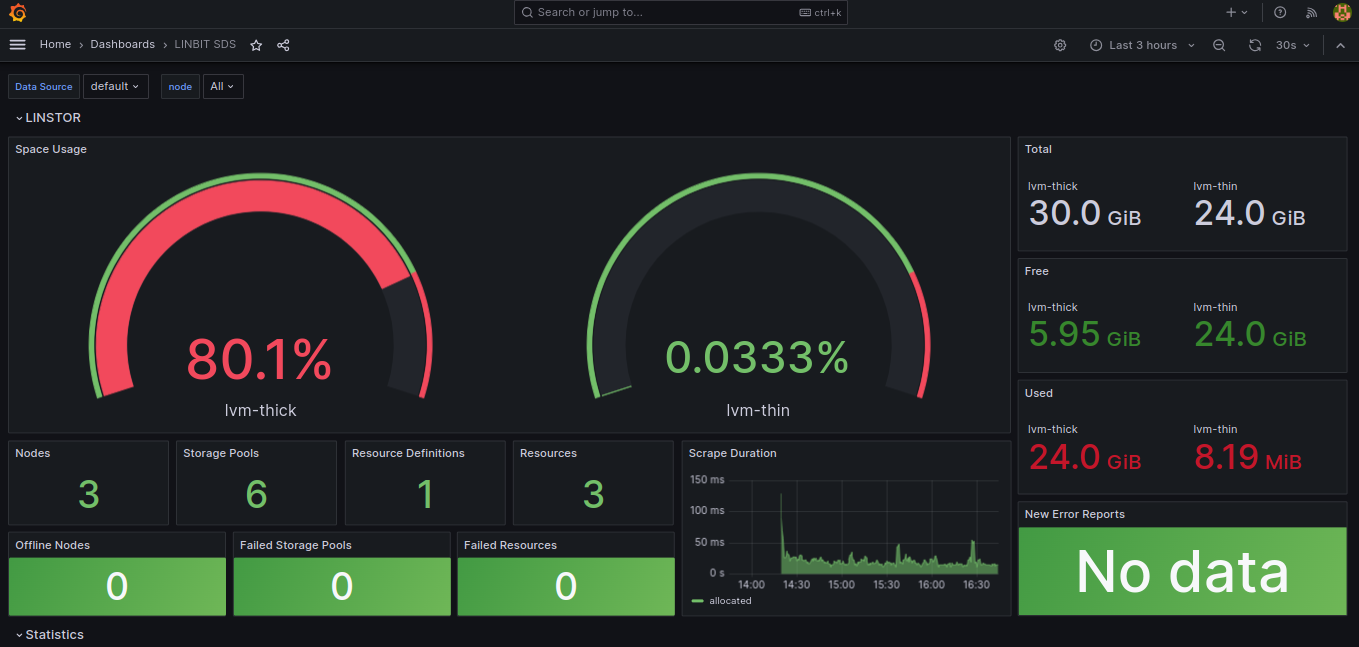

LINBIT SDS グラフィカルユーザーインターフェース

LINBIT SDSのグラフィカルユーザーインターフェース(GUI)は、LINSTORと連携して使用できるWebベースのGUIです。これは、LINSTORクラスターに関する概要情報を取得したり、クラスター内のLINSTORオブジェクトを追加、変更、削除したりする便利な方法となります。例えば、ノードを追加したり、リソースを追加・削除したり、その他のタスクを実行したりすることができます。

このユーザーガイドでは、GUIインターフェースの使用方法については、 LINBIT SDS GUIチャプター で詳細をご覧いただけます。

1.4. 内部コンポーネント

LINSTORの内部コンポーネントは、LINSTORがどのように機能し、どのように使用するかを表現するために使用されるソフトウェアコードの抽象化です。内部コンポーネントの例として、LINSTORオブジェクトであるリソースやストレージプールが挙げられます。これらは抽象化されていますが、LINSTORクライアントまたはGUIを使用してストレージを展開し管理する際に、非常に現実的な方法でそれらとやり取りします。

このセクションでは、LINSTORの動作原理や使用方法を理解するために必要な基本概念や用語を紹介し、説明しています。

1.4.1. LINSTOR オブジェクト

LINSTORは、ソフトウェア定義ストレージ(SDS)に対してオブジェクト指向のアプローチを取っています。LINSTORオブジェクトは、ユーザーやアプリケーションが利用するか、または拡張するためにLINSTORが提供する最終成果物です。

以下に、最も一般的に使用されるLINSTORのオブジェクトが説明されており、その後に全リストが続きます。

リソース

リソースとは、アプリケーションやエンドユーザーに提示される消費可能なストレージを表すLINSTORオブジェクトのことです。LINSTORが工場であるならば、リソースはそれが生産する製品です。多くの場合、リソースはDRBD複製ブロックデバイスですが、必ずしもそうである必要はありません。例えば、DRBDのクォーラム要件を満たすためにディスクレスなリソースであったり、NVMe-oFやEBSイニシエータである場合もあります。

リソースは以下の属性を持っています:

-

リソースが存在するノードの名前

-

そのリソースが属するリソース定義

-

リソースの構成プロパティ

ボリューム

ボリュームは、物理ストレージに最も近いLINSTORの内部コンポーネントであり、リソースのサブセットです。1つのリソースに複数のボリュームを持つことができます。例えば、MySQLクラスターの中で、データベースをトランザクションログよりも遅いストレージに保存したい場合があります。LINSTORを使用してこれを実現するためには、より高速なトランザクションログストレージメディア用の1つのボリュームと、より遅いデータベースストレージメディア用の別のボリュームを持ち、どちらも単一の「MySQL」リソースの下に配置します。1つのリソースの下に複数のボリュームを保持することで、実質的にコンシステンシーグループを作成しています。

ボリュームに指定した属性は、LINSTORオブジェクト階層の上位で同じ属性が指定されている場合よりも優先されます。これは、再度述べるように、ボリュームが物理ストレージに最も近い内部的なLINSTORオブジェクトであるためです。

ノード

Nodeとは、LINSTORクラスタに参加するサーバーまたはコンテナのことです。Nodeオブジェクトには、以下の属性があります。

-

名前

-

IP アドレス

-

TCP port

-

ノードタイプ (controller, satellite, combined, auxiliary)

-

コミュニケーション型 (plain or SSL/TLS)

-

ネットワークインターフェース型

-

ネットワークインターフェース名

ストレージプール

ストレージプールは、他のLINSTORオブジェクト(LINSTORリソース、リソース定義、またはリソースグループ)に割り当てることができるストレージを識別し、LINSTORボリュームによって利用されることができます。

ストレージプール定義

-

The storage back-end driver to use for the storage pool on the cluster node, for example, LVM, thin-provisioned LVM, ZFS, or others

-

ストレージプールが存在するノード

-

ストレージプール名

-

ストレージプールの構成プロパティ

-

ストレージプールのバックエンドドライバー(LVM、ZFS、その他)に渡す追加パラメータ

LINSTORオブジェクトのリスト

LINSTORには次のコアオブジェクトがあります:

| EbsRemote | ResourceConnection | SnapshotVolumeDefinition |
| ExternalFile | ResourceDefinition | StorPool |
| KeyValueStore | ResourceGroup | StorPoolDefinition |
| LinstorRemote | S3Remote | Volume |
| NetInterface | Schedule | VolumeConnection |
| Node | Snapshot | VolumeDefinition |
| NodeConnection | SnapshotDefinition | VolumeGroup |
| Resource | SnapshotVolume | |

1.4.2. 定義とグループオブジェクト

定義とグループもLINSTORオブジェクトですが、特別な種類です。定義とグループのオブジェクトは、プロファイルやテンプレートと考えることができます。これらのテンプレートオブジェクトは、親オブジェクトの属性を継承する子オブジェクトを作成するために使用されます。また、子オブジェクトに影響を与える可能性のある属性を持つこともありますが、それは子オブジェクト自体の属性ではありません。これらの属性には、たとえば、DRBDレプリケーションに使用するTCPポートや、DRBDリソースが使用するボリューム番号などが含まれる可能性があります。

Definitions

Definitions はオブジェクトの属性を定義します。定義から作成されたオブジェクトは、定義された構成属性を継承します。子オブジェクトを作成する前に、その関連する定義を作成する必要があります。例えば、対応するリソースを作成する前に、リソースの定義を作成する必要があります。

LINSTORクライアントを使用して直接作成できる2つのLINSTOR定義オブジェクトがあります。リソースの定義とボリュームの定義です。

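- Resource definition

A resource definition can define the following attributes of a resource:

  - The resource group that the resource definition belongs to
  - The name of a resource (implicitly, by the name of the resource definition)
  - The TCP port to use for the resource's connection, for example, when DRBD is replicating data
  - Other attributes, such as a resource's storage layers, peer slots, and external name

- Volume definition

A volume definition can define the following:

  - The size of the storage capacity
  - The volume number of the storage volume (because a resource can have multiple volumes)
  - The metadata properties of the volume
  - The minor number to use for the volume, if it is associated with DRBD replicated storage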

これらの定義に加えて、LINSTORにはいくつかの間接的な定義があります。それは、ストレージプール定義、スナップショット定義、およびスナップショットボリューム定義です。LINSTORは、対応するオブジェクトを作成する際にこれらを自動的に作成します。

グループ

グループは、プロファイルやテンプレートのような役割を持つため、定義と似ています。定義はLINSTORオブジェクトのインスタンスに適用されるのに対して、グループはオブジェクトの定義に適用されます。名前が示す通り、1つのグループは複数のオブジェクト定義に適用でき、1つの定義が複数のオブジェクトインスタンスに適用できるという点で類似しています。たとえば、頻繁に必要なストレージユースケースのためのリソース属性を定義するリソースグループを持つことができます。その後、そのリソースグループを使用して、その属性を持つ複数のリソースを簡単に生成(作成)できます。リソースを作成するたびに属性を指定する必要がなくなります。

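- Resource group

A resource group is a parent object of a resource definition. All property changes made on a resource group are inherited by its resource definition children. Property changes made on a resource group are also reflected in the corresponding .res resource files. However, property changes made on the parent are not copied to the child objects (resource definition or resource LINSTOR objects), so the child objects themselves do not carry the property. The change affects the object's children, but the property itself stays on the parent. A resource group also stores settings for automatic placement rules and can spawn a resource definition based on the stored rules.

A resource group defines the characteristics of its resource definition child objects. A resource that is spawned from a resource group, or created from a resource definition that belongs to the resource group, is a "grandchild" object of the resource group and a "child" object of the resource definition. Every resource definition that you create is a member of the default LINSTOR resource group, DfltRscGrp, unless you specify otherwise.

Changes to a resource group apply retroactively to all resources and resource definitions that were created from the resource group, unless the same characteristic has been set on a child object, for example, on a resource definition or a resource.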

これにより、リソースグループを使用することは、多数のストレージリソースを効率的に管理するための強力なツールとなります。個々のリソースを作成または修正する代わりに、リソースグループの親を構成するだけで、すべての子リソースオブジェクトに構成が適用されます。

- Volume group

Similarly, a volume group is like a profile or template for volume definitions. A volume group must always reference a specific resource group. In addition, a volume group can define a volume number and a "gross" volume size.

1.5. LINSTORのオブジェクト階層

この章の前のサブセクションでほのめかされた通り、LINSTORオブジェクトには階層概念が存在します。文脈によっては、これを親子関係として説明することもできますし、上位と下位の関係として説明することもできます。ここで下位とは、物理ストレージレイヤーに近いことを意味します[注: 物理ストレージは、例えば「ディスクレス」ノードのように、特定のノードに存在しない場合があります。ここでの「物理ストレージ」レイヤーは、LINSTORにおけるオブジェクトの階層構造を概念化するための架空のものです。]。

子要素は、親要素に定義された属性を継承しますが、子要素で既に定義されている場合を除きます。同様に、下位要素は、上位要素に設定された属性を受け取りますが、下位要素で既に定義されている場合を除きます。

1.5.1. LINSTORにおけるオブジェクト階層の一般的なルール

次に、LINSTORにおけるオブジェクト階層の一般的なルールがいくつかあります:

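- A LINSTOR object can only receive or inherit attributes that can be set on that object.
- Lower objects receive attributes that are set on upper objects.
- An attribute set on a lower object takes precedence over the same attribute set on an upper object.
- Child objects inherit attributes that are set on parent objects.
- An attribute set on a child object takes precedence over the same attribute set on a parent object.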
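The precedence rules above can be sketched as a small shell function. This is only an illustration of the "closest value wins" idea, not LINSTOR's actual implementation. The function name `resolve_property` and the argument order (lowest object first, highest object last) are assumptions made for this example; the values `sha256` and `crc32c` are the verify-alg values used later in this guide.

```shell
#!/bin/sh
# resolve_property VALUE_ON_LOWEST_OBJECT ... VALUE_ON_HIGHEST_OBJECT
# Hypothetical helper: prints the first non-empty value, so a value set on
# a lower (or child) object wins over the same property set higher up.
resolve_property() {
    for value in "$@"; do
        if [ -n "$value" ]; then
            printf '%s\n' "$value"
            return 0
        fi
    done
    # No object in the chain sets the property.
    return 1
}

# Example: nothing set on the resource, sha256 on the resource definition,
# crc32c on the resource group. The resource definition value is used.
resolve_property "" "sha256" "crc32c"
```

If the resource definition value were also empty, the same call would fall back to the resource group value, `crc32c`.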

1.5.2. LINSTORオブジェクト間の関係を示すための図を使用する

このセクションでは、最も頻繁に使用されるLINSTORオブジェクト間の階層関係を表す図を使用しています。LINSTORオブジェクトの数とそれらの相互関係のため、概念化を簡素化するために、1つの図ではなく複数の図を最初に表示しています。

次の図は、単一のサテライトノード上でのLINSTORグループオブジェクト間の関係を示しています。

前の2つの図は、一般的なLINSTORオブジェクト間の上位下位の関係を示していますが、LINSTORオブジェクトの中には親子関係を持つものも考えられます。次の図では、ストレージプールの定義(親オブジェクト)とストレージプール(子オブジェクト)を例に、この種の関係が紹介されています。親オブジェクトは複数の子オブジェクトを持つことができます。そのような関係を示す図が以下に示されています。

概念図で親子関係の概念を導入した後、次の図は、グループや定義に一部の関係を追加した第2図の修正版です。この修正図には、最初の図で示された上位-下位の関係も一部取り入れられています。

次のダイアグラムは、以前のダイアグラムの関係性の概念を統合しつつ、スナップショットや接続に関連する新しいLINSTORオブジェクトを紹介しています。さまざまなオブジェクトと交差する線があるため、このダイアグラムへの構築の理由は明らかになるはずです。

前述の図は複雑なように見えますが、それでも簡略化されたものであり、LINSTORオブジェクト間の可能な関係のすべてを網羅しているわけではありません。前述したように、図に示されている以上に多くのLINSTORオブジェクトが存在します。[LINSTORは進化するソフトウェアであるため、特定のユースケースやコンテキストにおいては、オブジェクトのプロパティ階層が一般的なルールと異なることがある可能性があります。これらの場合には、文書で一般ルールへの例外に言及されます。]。

良いニュースは、LINSTORを扱う際に先行する図を暗記する必要がないということです。ただし、LINSTORオブジェクトに設定した属性、およびその他のLINSTORオブジェクトに対する継承と効果をトラブルシューティングしようとしている場合には参照すると役立つかもしれません。

LINSTORクラスターの管理

2. 基本管理タスクとシステム設定

これは、基本的なLINSTOR®管理タスクについて説明する「ハウツー」スタイルの章で、LINSTORのインストール方法やLINSTORの使用を始める方法がカバーされています。

2.1. LINSTORをインストールする前に

LINSTORをインストールする前に、LINSTORのインストール方法に影響を与える可能性があるいくつかのことを認識しておく必要があります。

2.1.1. パッケージ

LINSTOR は RPM と DEB 形式でパッケージングされています。

- linstor-client contains the command-line client program. It depends on Python, which is usually already installed. On RHEL 8 systems, you will need a symbolic link to python.

- linstor-controller and linstor-satellite both contain systemd unit files for the services. They depend on a Java runtime environment (JRE) version 1.8 (headless) or higher.

これらのパッケージについての詳細は インストール可能なコンポーネント を参照ください。

| LINBIT(R)サポート契約をお持ちであれば、LINBIT顧客専用リポジトリを通じて認定されたバイナリにアクセスできます。 |

2.1.2. FIPSコンプライアンス

この標準は、暗号モジュールの設計および実装に使用されます。 — NIST’s FIPS 140-3 publication

LINSTORを設定して、このユーザーガイドの Encrypted Volumes セクションに詳細が記載されているように、LUKS (dm-crypt) を使用してストレージボリュームを暗号化することができます。 FIPS 規格に準拠しているかどうかについては、LUKSと dm-crypt プロジェクトを参照してください。

LINSTORを構成して、LINSTORサテライトとLINSTORコントローラー間の通信トラフィックをSSL/TLSを使用して暗号化することもできます。このユーザーガイドの 安全なサテライト接続 セクションで詳細に説明されています。

LINSTOR can also interface with Self-Encrypting Drives (SEDs) and you can use LINSTOR to initialize an SED drive. LINSTOR stores the password of an SED as a property that applies to the storage pool associated with the drive. LINSTOR encrypts the SED drive password by using the LINSTOR master passphrase that you must create first.

デフォルトでは、LINSTOR は以下の暗号アルゴリズムを使用します:

-

HMAC-SHA2-512

-

PBKDF2

-

AES-128

A FIPS-compliant version of LINSTOR is available for the use cases mentioned in this section. If you or your organization require FIPS compliance at this level, contact LINBIT for details.

2.2. インストール

| コンテナで LINSTOR を使用する場合は、このセクションをスキップして、以下の Containers セクションを使用してインストールしてください。 |

2.2.1. ボリュームマネージャーのインストール

LINSTOR を使用してストレージボリュームを作成するには、LVM または ZFS のいずれかのボリュームマネージャーをシステムにインストールする必要があります。

2.2.2. LINBIT クラスターノードを管理するスクリプトの使用

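If you are a LINBIT® customer, you can download a helper script created by LINBIT and run it on your nodes to do the following:

- Register a cluster node with LINBIT.
- Join a node to an existing LINBIT cluster.
- Enable LINBIT package repositories on your node.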

LINBIT パッケージリポジトリを有効にすると、LINBIT ソフトウェアパッケージ、DRBD® カーネルモジュール、およびクラスタマネージャや OCF スクリプトなどの関連ソフトウェアにアクセスできるようになります。その後、パッケージマネージャを使用して、パッケージの取得、インストール、および更新済みパッケージの管理を行うことができます。

LINBIT Manage Node スクリプトをダウンロードします。

LINBITにクラスターノードを登録し、LINBITのリポジトリを構成するには、まず全てのクラスターノードで以下のコマンドを入力して、ノード管理ヘルパースクリプトをダウンロードして実行してください。

# curl -O https://my.linbit.com/linbit-manage-node.py
# chmod +x ./linbit-manage-node.py
# ./linbit-manage-node.py

| You must run the script as the root user. |

このスクリプトは、 LINBIT カスタマーポータル のユーザー名とパスワードの入力を求めます。資格情報を入力すると、スクリプトはアカウントに関連付けられたクラスターノードを一覧表示します (最初は何もありません)。

LINBIT パッケージ リポジトリの有効化

ノードを登録するクラスターを指定した後、プロンプトが表示されたら、スクリプトで登録データを JSON ファイルに書き込みます。次に、スクリプトは、有効または無効にできる LINBIT リポジトリのリストを表示します。 LINSTOR およびその他の関連パッケージは、drbd-9 リポジトリにあります。別の DRBD バージョンブランチを使用する必要がない限り、少なくともこのリポジトリを有効にする必要があります。

ノード管理スクリプト内の最終タスク

リポジトリの選択が完了したら、スクリプトのプロンプトに従って構成をファイルに書き込むことができます。次に、LINBIT の公開署名鍵をノードのキーリングにインストールすることに関する質問には必ず yes と答えてください。

スクリプトが終了する前に、異なるユースケースに応じてインストールできるさまざまなパッケージを提案するメッセージが表示されます。

| On DEB-based systems, you can install either a precompiled DRBD kernel module package, drbd-module-$(uname -r), or a source version of the kernel module, drbd-dkms. Install one or the other package, but not both. |

2.2.3. パッケージマネージャーを使用して LINSTOR をインストール

ノードを登録し drbd-9 LINBIT パッケージリポジトリを有効にすると、DEB、RPM、または YaST2 ベースのパッケージマネージャーを使用して LINSTOR と関連コンポーネントをインストールできます。

| If you are using a DEB-based package manager, refresh your package repository list by entering apt update before you proceed. |

LINSTOR ストレージ用の DRBD パッケージのインストール

| もし DRBDを使用せずにLINSTORを使用する 予定であるなら、これらのパッケージのインストールをスキップすることができます。 |

LINSTOR を使用して DRBD 複製ストレージを作成できるようにする場合は、必要な DRBD パッケージをインストールする必要があります。ノードで実行している Linux ディストリビューションに応じて、ヘルパースクリプトが提案する DRBD 関連のパッケージをインストールします。スクリプトの推奨パッケージとインストールコマンドを確認する必要がある場合は、次のように入力できます。

# ./linbit-manage-node.py --hints

2.2.4. ソースコードから LINSTOR をインストール

LINSTOR プロジェクトの GitHub ページは https://github.com/LINBIT/linstor-server です。

LINBIT には、LINSTOR、DRBD などのソースコードのダウンロード可能なアーカイブファイルもあります。 https://linbit.com/linbit-software-download-page-for-linstor-and-drbd-linux-driver/ から利用可能です。

2.3. LINSTOR のアップグレード

LINSTORはローリングアップグレードをサポートしていません。クラスター内で稼働するLINSTORのコントローラーとサテライトサービスは、同一のバージョンである必要があります。そうでない場合、コントローラーは VERSION_MISMATCH としてそのサテライトを切断します。これは修正が必要な状況ですが、データにリスクが及ぶことはありません。サテライトノードはコントローラーノードに接続されていない限り、いかなるアクションも実行しません。また、LINSTORはデータプレーンとコントロールプレーンが分離されているため、この状態がDRBDのデータレプリケーションを妨げることはありません。

2.3.1. アップグレード前のコントローラーデータベースのバックアップ

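Before you upgrade LINSTOR, back up the LINSTOR controller database. If you use the default embedded H2 database, upgrading the linstor-controller package creates an automatic backup file of the database in the default /var/lib/linstor directory. If a linstor-controller database migration fails, this file is a good restore point. In that case, it is recommended that you report the error to LINBIT, restore the old database file, and roll back to your previous controller version.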

外部データベースまたはetcdを使用している場合は、LINSTORをアップグレードする前に現在のデータベースの手動バックアップを作成してください。必要に応じて、このバックアップをリストアポイントとして使用できます。

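2.3.2. Upgrading LINSTOR on RPM-based Linux

On RPM-based Linux distributions, LINSTOR services are not restarted automatically when you upgrade the LINSTOR packages. For this reason, you can expect less downtime of the LINSTOR services (the LINSTOR control plane) during an upgrade than on other systems.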

すべてのノードで、該当するLINSTORパッケージを更新してください。LINSTORクラスター内での各ノードの役割に応じたパッケージのみが更新されるよう、以下のコマンドを適宜変更してください。

dnf update -y linstor-client linstor-controller linstor-satellite

全ノードでLINSTORパッケージをアップデートした後、すべてのサテライトノードでLINSTORサテライトサービスを可能な限り同時に再起動してください。ただし、現在稼働中のコントローラーノードが「コンバインド(統合)」ロールである場合は、そのノードを除いてください。

systemctl restart linstor-satellite

Next, restart the LINSTOR controller service on the node where the service is actively running. If that node has the combined role, also restart the LINSTOR satellite service on it, for example:

systemctl restart linstor-satellite linstor-controller

| クラスター内でLINSTORコントローラーサービスを高可用化している場合は、候補となるすべてのLINSTORコントローラーノードで`linstor-controller`パッケージを更新する必要があります。ただし、LINSTORコントローラーサービスを再起動する必要があるのは、現在サービスが稼働しているノードのみです。 |
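The order of operations for an RPM-based upgrade can be sketched as a dry-run script that only prints the commands. The node names `alpha`, `bravo`, and `charlie`, the use of `ssh` to reach each node, and the function name `plan_rpm_upgrade` are assumptions for illustration. The sketch also updates all three packages on every node and restarts satellites one after the other, so adapt the package list to each node's role and restart the satellites as close to simultaneously as you can.

```shell
#!/bin/sh
# Hypothetical cluster layout; replace with your own node names.
CONTROLLER="alpha"            # combined node that runs the active controller
SATELLITES="bravo charlie"    # satellite-only nodes

# Print the upgrade commands in the order described above (dry run only).
plan_rpm_upgrade() {
    # Step 1: update the LINSTOR packages on all nodes.
    for node in $CONTROLLER $SATELLITES; do
        echo "ssh $node dnf update -y linstor-client linstor-controller linstor-satellite"
    done
    # Step 2: restart the satellite service on all satellite-only nodes.
    for node in $SATELLITES; do
        echo "ssh $node systemctl restart linstor-satellite"
    done
    # Step 3: restart satellite and controller on the combined controller node.
    echo "ssh $CONTROLLER systemctl restart linstor-satellite linstor-controller"
}

plan_rpm_upgrade
```

Printing the plan first makes it easy to review the sequence before running any of the commands by hand.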

2.3.3. DEBベースのLinuxでのLINSTORのアップグレード

DEBベースのLinuxシステムでは、アップデート版のインストール後にLINSTORサービスが自動的に再起動されるため、RPMベースのシステムよりも、LINSTORコントロールプレーンのダウンタイムを長く見積もる必要があります。

まず、アクティブなLINSTORコントローラーノード上でLINSTORコントローラーソフトウェアをアップグレードしてください。

apt install -y --only-upgrade linstor-controller

| クラスター内でLINSTORコントローラーサービスを高可用化している場合は、LINSTORコントローラーの候補となるすべてのノードで`linstor-controller`パッケージを更新する必要があります。 |

ソフトウェアをアップグレードすると、コントローラーサービスが自動的に再起動します。この時点で`linstor node list`コマンドを実行すると、サテライトノードの状態が`OFFLINE(VERSION MISMATCH)`と表示されます。

次に、LINSTORのクライアントおよびサテライトのパッケージがインストールされているノードで、それらのパッケージを更新してください。LINSTORクラスターにおける各ノードの役割に合わせて、以下のコマンドを修正し、該当するパッケージのみを更新するようにしてください。

apt install -y --only-upgrade linstor-client linstor-satellite

| Upgrading the LINSTOR satellite service software package on your satellite (and combined) nodes will automatically restart the LINSTOR satellite service on those nodes. |

2.3.4. Verifying a LINSTOR upgrade

After you have upgraded all applicable LINSTOR packages on each node in your cluster, verify the following:

- On all nodes that run the LINSTOR client, the linstor --version command shows the expected LINSTOR client software version number.

- The output of the linstor controller version command shows the expected LINSTOR controller software version. If you have made the LINSTOR controller service highly available, you need to use a package manager query command (dnf info linstor-controller or apt show linstor-controller) to check the installed software version on each controller candidate node.

- The output of the linstor node list command shows all LINSTOR satellite nodes in an Online state. If a node has a LINSTOR satellite software version installed that does not match the controller version, the LINSTOR controller service writes a version mismatch error message to its log (journalctl -xeu linstor-controller). The linstor node list output then shows that node in an OFFLINE(VERSION MISMATCH) state.
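To spot nodes that did not come back online after an upgrade, you could filter the table that linstor node list prints. The following is a minimal sketch that assumes the table layout shown in this guide, with columns separated by the ┊ character and the state in the fourth column. The function name `nodes_not_online`, the node alpha, and its address are made up for the example, which runs against sample text rather than a live cluster.

```shell
#!/bin/sh
# Reads `linstor node list` table output on stdin and prints the names of
# nodes whose State column is anything other than "Online".
# Assumption: columns are Node, NodeType, Addresses, State, separated by "┊".
nodes_not_online() {
    awk -F'┊' '
        NF >= 5 {
            name = $2
            state = $5
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", state)
            # Skip the header row, report every other row that is not Online.
            if (name != "Node" && state != "Online") print name
        }'
}

# Sample input for illustration only.
nodes_not_online <<'EOF'
┊ Node  ┊ NodeType  ┊ Addresses               ┊ State                     ┊
┊ alpha ┊ COMBINED  ┊ 10.43.70.2:3366 (PLAIN) ┊ Online                    ┊
┊ bravo ┊ SATELLITE ┊ 10.43.70.3:3366 (PLAIN) ┊ OFFLINE(VERSION MISMATCH) ┊
EOF
```

On a real cluster, the equivalent call would be `linstor node list | nodes_not_online`, provided that the client prints the same table layout when its output is piped.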

2.4. コンテナ

LINSTORはコンテナとしても利用可能です。ベースイメージはLINBITのコンテナレジストリ drbd.io にあります。

| The LINBIT container image repository (https://drbd.io) is only available to LINBIT customers or through LINBIT customer trial accounts. Contact LINBIT for information on pricing or to begin a trial. Alternatively, you can use LINSTOR SDS’ upstream project named Piraeus, without being a LINBIT customer. |

To access the images, you first need to log in to the registry by using your LINBIT customer portal credentials.

# docker login drbd.io

このリポジトリで利用可能なコンテナは以下です。

- drbd.io/drbd9-rhel8
- drbd.io/drbd9-rhel7
- drbd.io/drbd9-sles15sp1
- drbd.io/drbd9-bionic
- drbd.io/drbd9-focal
- drbd.io/linstor-csi
- drbd.io/linstor-controller
- drbd.io/linstor-satellite
- drbd.io/linstor-client

You can find an up-to-date list of available images with versions at https://drbd.io.

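You only need to load the kernel module on LINSTOR satellites. To do this, run a drbd9-$dist container in privileged mode. The kernel module containers either retrieve an official LINBIT package from a customer repository, use shipped packages, or try to build the kernel modules from source. If you intend to build from source, you need the corresponding kernel headers (for example, kernel-devel) installed on the host. There are four ways to run such a module load container:

- Build from shipped source.
- Use a shipped or prebuilt kernel module.
- Specify a LINBIT node hash and a distribution.
- Bind mount an existing repository configuration.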

Example of building from shipped source (RHEL based):

# docker run -it --rm --privileged -v /lib/modules:/lib/modules \
  -v /usr/src:/usr/src:ro \
  drbd.io/drbd9-rhel7

Example of using a module shipped with the container, which is enabled by not bind mounting /usr/src:

# docker run -it --rm --privileged -v /lib/modules:/lib/modules \
  drbd.io/drbd9-rhel8

Example of specifying a hash and a distribution (rarely used):

# docker run -it --rm --privileged -v /lib/modules:/lib/modules \
  -e LB_DIST=rhel7.7 -e LB_HASH=ThisIsMyNodeHash \
  drbd.io/drbd9-rhel7

Example of using an existing repository configuration (rarely used):

# docker run -it --rm --privileged -v /lib/modules:/lib/modules \
  -v /etc/yum.repos.d/linbit.repo:/etc/yum.repos.d/linbit.repo:ro \
  drbd.io/drbd9-rhel7

| In both cases (hash and distribution, as well as bind mounting a repository configuration), the hash or repository configuration has to come from a node that has a special property set. Contact LINBIT customer support to have this property set. |

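| For now (that is, before DRBD 9 version 9.0.17), you must use the containerized DRBD kernel module, as opposed to loading a kernel module onto the host system. If you intend to use the containers, do not install the DRBD kernel module on your host systems. For DRBD version 9.0.17 or later, you can install the kernel module as usual on the host system, but you need to load the module with the usermode_helper=disabled parameter, for example, modprobe drbd usermode_helper=disabled. |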

次に、デーモンとして特権モードで LINSTOR サテライトコンテナを実行します。

# docker run -d --name=linstor-satellite --net=host -v /dev:/dev \
  --privileged drbd.io/linstor-satellite

| net=host is required so that the containerized drbd-utils can communicate with the host kernel through Netlink. |

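To run the LINSTOR controller container as a daemon, map the controller's TCP ports on the host to the container. The following example maps port 3370 only; mapping ports 3376 and 3377 as well would need additional -p options: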

# docker run -d --name=linstor-controller -p 3370:3370 drbd.io/linstor-controller

To interact with a containerized LINSTOR cluster, you can either use a LINSTOR client that is installed on a system from packages, or use the containerized LINSTOR client. To use the LINSTOR client container:

# docker run -it --rm -e LS_CONTROLLERS=<controller-host-IP-address> \
  drbd.io/linstor-client node list

これ以降は、LINSTOR クライアントを使用してクラスタを初期化し、一般的な LINSTOR パターンを使用してリソースの作成を開始します。

To stop and remove a daemonized container:

# docker stop linstor-controller
# docker rm linstor-controller

2.5. クラスタの初期化

LINSTORクラスタを初期化する前に、 すべて のクラスタノードで以下の前提条件を満たす必要があります。

- The DRBD9 kernel module is installed and loaded.
- The drbd-utils package is installed.
- LVM tools are installed.
- The linstor-controller or linstor-satellite package and its dependencies are installed on the appropriate nodes.
- The linstor-client package is installed on the linstor-controller node.

コントローラがインストールされているホストで linstor-controller サービスを起動して有効にします。

# systemctl enable --now linstor-controller

2.6. LINSTORクライアントの使用

Whenever you run the LINSTOR command-line client, it needs to know on which cluster node the linstor-controller service is running. If you do not specify this, the client tries to reach a locally running linstor-controller service that listens on IP address 127.0.0.1, port 3370. For now, therefore, use the linstor-client on the same host as the linstor-controller.

| The linstor-satellite service requires TCP ports 3366 and 3367. The linstor-controller service requires TCP port 3370. Verify that your firewall allows these ports. |

# linstor node list

このコマンドの出力は、空のリストが表示されるべきであり、エラーメッセージではありません。
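If the client cannot reach the controller, a blocked port is one possible cause. The following sketch maps each node role to the TCP ports listed in this section and prints matching firewall commands without running them. It assumes that the node uses firewalld (`firewall-cmd`), which this guide does not require, and the function names are made up for the example.

```shell
#!/bin/sh
# TCP ports that each LINSTOR role needs, as listed in this section.
ports_for_role() {
    case "$1" in
        satellite)  echo "3366 3367" ;;
        controller) echo "3370" ;;
        combined)   echo "3366 3367 3370" ;;
        *) echo "unknown role: $1" >&2; return 1 ;;
    esac
}

# Print (do not run) firewalld commands that would open the ports for a role.
# Assumption: the node uses firewalld; adapt this for other firewalls.
print_firewall_cmds() {
    ports=$(ports_for_role "$1") || return 1
    for port in $ports; do
        echo "firewall-cmd --permanent --add-port=${port}/tcp"
    done
    echo "firewall-cmd --reload"
}

print_firewall_cmds combined
```

Review the printed commands and run them as root only if they fit your firewall setup.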

You can use the linstor command on any other machine, but then you need to tell the client how to find the LINSTOR controller. You can specify this as a command-line option or by using an environment variable:

# linstor --controllers=alice node list
# LS_CONTROLLERS=alice linstor node list

If you have configured HTTPS access to the LINSTOR controller REST API and you want the LINSTOR client to access the controller over HTTPS, you need to use the following syntax:

# linstor --controllers linstor+ssl://<controller-node-name-or-ip-address>
# LS_CONTROLLERS=linstor+ssl://<controller-node-name-or-ip-address> linstor node list

2.6.1. LINSTORの構成ファイルでコントローラを指定する

あるいは、/etc/linstor/linstor-client.conf ファイルを作成し、global セクションに controllers= 行を追加することもできます。

[global]
controllers=alice

複数の LINSTOR コントローラーが構成されている場合は、それらをカンマ区切りのリストで指定するだけです。 LINSTOR クライアントは、リストされた順番でそれらを試します。
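As a small illustration, the following sketch renders the content of a client configuration file with a comma-separated controller list. The function name `render_client_conf` and the second controller name, bob, are made up for the example. The function only prints the text; writing it to /etc/linstor/linstor-client.conf is left to you.

```shell
#!/bin/sh
# render_client_conf CONTROLLER...
# Prints a [global] section with the given controllers joined by commas,
# in the order in which the client should try them.
render_client_conf() {
    list=""
    for host in "$@"; do
        if [ -z "$list" ]; then
            list="$host"
        else
            list="$list,$host"
        fi
    done
    printf '[global]\ncontrollers=%s\n' "$list"
}

# Hypothetical controllers: alice is tried first, then bob.
render_client_conf alice bob
```

To use the result, you could redirect the output, for example `render_client_conf alice bob > /etc/linstor/linstor-client.conf`, after checking that you are not overwriting an existing file.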

2.6.2. LINSTOR クライアントの省略記法を使用する

LINSTORのクライアントコマンドは、コマンドやサブコマンド、パラメータの最初の文字だけを入力することで、より速く便利に使用することができます。例えば、 linstor node list を入力する代わりに、LINSTORの短縮コマンド linstor n l を入力することができます。

linstor commands コマンドを入力すると、それぞれのコマンドの略記法と共に可能な LINSTOR クライアントコマンドのリストが表示されます。これらの LINSTOR クライアントコマンドのいずれかに --help フラグを使用すると、コマンドのサブコマンドの略記法を取得できます。

2.7. ノードをクラスタに追加する

LINSTORクラスターを初期化した後、次のステップはクラスターにノードを追加することです。

# linstor node create bravo 10.43.70.3

IPアドレスを省略した場合、前述の例で bravo と指定されたノード名を LINSTOR クライアントがホスト名として解決しようと試みます。ホスト名が、LINSTOR コントローラーサービスが実行されているシステムからのネットワーク上のホストに解決されない場合、ノードの作成を試みた際に LINSTOR はエラーメッセージを表示します。

Unable to resolve ip address for 'bravo': [Errno -3] Temporary failure in name resolution
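When you add several nodes, passing an explicit IP address for each one avoids the name resolution error shown above. The following sketch reads node names and addresses from standard input and prints one linstor node create command per line. By default it only prints the commands, because the hypothetical `LINSTOR_CMD` variable is set to `echo linstor`. The node alpha and its address are made up for the example.

```shell
#!/bin/sh
# Dry run by default: the commands are printed, not executed.
# Set LINSTOR_CMD=linstor in the environment to run them for real.
LINSTOR_CMD="${LINSTOR_CMD:-echo linstor}"

# Reads "NAME [IP]" lines from stdin and creates one node per line.
add_nodes() {
    while read -r name ip; do
        # Skip empty lines.
        [ -n "$name" ] || continue
        # $ip is intentionally unquoted so that a missing address is
        # dropped and the client falls back to resolving the node name.
        $LINSTOR_CMD node create "$name" $ip
    done
}

add_nodes <<'EOF'
alpha 10.43.70.2
bravo 10.43.70.3
EOF
```

Keeping the node list in one place also makes it easy to compare against the output of linstor node list afterward.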

2.8. クラスター内のノードをリストアップします。

以下のコマンドを入力して、追加したノードを含むLINSTORクラスターのノードをリストアップできます:

# linstor node list

コマンドの出力には、LINSTORクラスター内のノードが一覧表示され、ノードに割り当てられたノードタイプ、ノードのIPアドレスとLINSTOR通信に使用されるポート、およびノードの状態などの情報が表示されます。

╭───────────────────────────────────────────────────────╮
┊ Node  ┊ NodeType  ┊ Addresses               ┊ State   ┊
╞═══════════════════════════════════════════════════════╡
┊ bravo ┊ SATELLITE ┊ 10.43.70.3:3366 (PLAIN) ┊ Offline ┊
╰───────────────────────────────────────────────────────╯

2.8.1. LINSTORノードの命名

By specifying an IP address when you create a LINSTOR node, LINSTOR will not need to resolve the hostname of the node and you can give the node an arbitrary name. The LINSTOR client will show an INFO message about this when you create the node:

[...] 'arbitrary-name' and hostname 'node-1' doesn't match.

LINSTORは自動的に作成されたノードの uname --nodename を検出し、後でDRBDリソースの構成に使用します。任意のノード名の代わりに、ほとんどの場合は自分自身や他の人を混乱させないよう、LINSTORノードを作成する際にはノードのホスト名を使用するのが適切です。

2.8.2. LINSTORサテライトノードの起動と有効化

linstor node list コマンドを使用すると、LINSTOR は新しいノードがオフラインとしてマークされていることを表示します。その後、新しいノードで LINSTOR サテライトサービスを開始して有効にし、サービスが再起動時にも起動するようにしてください。

# systemctl enable --now linstor-satellite

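| Usually, you do not need to start or stop the satellite service, except when troubleshooting or upgrading LINSTOR. However, this might be necessary after certain fatal errors, such as a UUID mismatch. Since linstor-server version 1.31.3, LINSTOR tries to recover automatically when it encounters a fatal error, by shutting down with exit code 70. Based on the configuration in the linstor-satellite.service unit file, systemd then automatically restarts the LINSTOR satellite service. |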

サテライトサービスを起動して有効化すると、約10秒後に linstor node list のステータスが「online」と表示されるようになります。もちろん、コントローラーがサテライトノードの存在を認識する前に、サテライトプロセスが開始されていることもあります。

| If the node that hosts your controller should also contribute storage to the LINSTOR cluster, you have to add it as a node and also start the linstor-satellite service on it. |

2.8.3. LINSTORノードタイプの指定

LINSTORノードを作成する際に、ノードタイプを指定することもできます。ノードタイプは、あなたのLINSTORクラスタ内でノードが担う役割を示すラベルです。ノードタイプは、controller, auxiliary, combined, satellite のいずれかになります。例えば、LINSTORノードを作成して、それをコントローラーノードとサテライトノードとしてラベルを付ける場合は、次のコマンドを入力してください:

# linstor node create bravo 10.43.70.3 --node-type combined

--node-type 引数はオプションです。ノードを作成する際にノードタイプを指定しない場合、LINSTORは自動的にデフォルトのサテライトタイプを使用します。

ノードを作成した後で、割り当てられた LINSTOR ノードのタイプを変更したい場合は、linstor node modify --node-type コマンドを入力します。

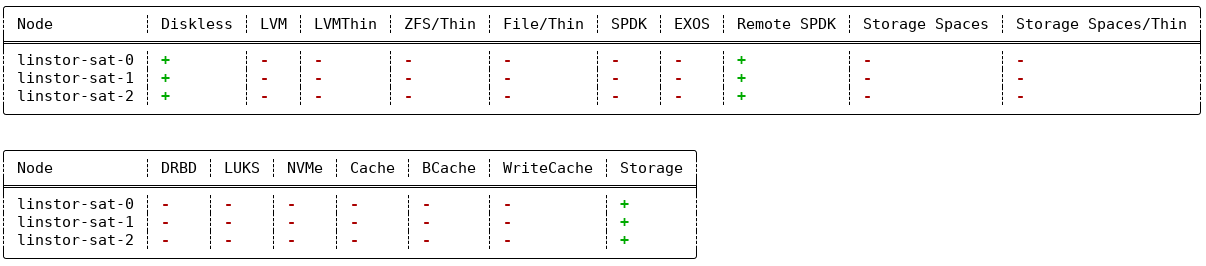

2.8.4. サポートされているストレージプロバイダーとストレージレイヤーの一覧

サテライトノードでサポートされているストレージプロバイダやストレージレイヤをリストアップするには、node info コマンドを使用することができます。クラスタ内でストレージオブジェクトを作成する前やトラブルシューティングの状況にある場合は、LINSTORを使用する前にそれを行ってください。

# linstor node info

コマンドの出力には2つのテーブルが表示されます。最初のテーブルでは利用可能な LINSTOR storage provider バックエンドが表示されます。2番目のテーブルでは利用可能な LINSTOR storage layers が表示されます。両方のテーブルで、特定のノードがストレージプロバイダーまたはストレージレイヤーをサポートしているかどうかが示されます。プラス記号「+」は”サポートされています”を、マイナス記号「-」は”サポートされていません”を表します。

クラスターの例の出力は、以下のようなものに類似しているかもしれません。

Example output of the linstor node info command

2.9. Storage pools

ストレージプール は、LINSTORのコンテキストでストレージを識別します。複数のノードからストレージプールをグループ化するには、単純に各ノードで同じ名前を使用します。例えば、すべてのSSDには1つの名前を、すべてのHDDには別の名前を指定するのが有効なアプローチです。

2.9.1. ストレージプールの作成

各ストレージを提供するホスト上で、LVMのボリュームグループ(VG)またはZFSのzPoolを作成する必要があります。同じLINSTORストレージプール名で識別されるVGやzPoolは、各ホストで異なるVGやzPool名を持つかもしれませんが、整合性を保つために、すべてのノードで同じVGまたはzPool名を使用することをお勧めします。

| When using LINSTOR together with LVM and DRBD, set the global_filter value in the LVM global configuration file as follows: global_filter = [ "r|^/dev/drbd|", "r|^/dev/mapper/[lL]instor|" ] This setting tells LVM to reject DRBD and other devices created or set up by LINSTOR from operations such as scanning or opening attempts. In some cases, not setting this filter might lead to increased CPU load or stuck LVM operations. |

# vgcreate vg_ssd /dev/nvme0n1 /dev/nvme1n1 [...]

各ノードにボリュームグループを作成した後、次のコマンドを入力することで、各ノードでボリュームグループにバックアップされたストレージプールを作成することができます。

# linstor storage-pool create lvm alpha pool_ssd vg_ssd # linstor storage-pool create lvm bravo pool_ssd vg_ssd

ストレージプールの一覧を表示するには、次のコマンドを入力してください:

# linstor storage-pool list

または、LINSTORの略記法を使用することもできます。

# linstor sp l
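Because a storage pool spans nodes simply by using the same pool name on each of them, creating it is a matter of repeating one command per node. The following sketch prints those commands for a list of nodes, using the pool and volume group names from the earlier example. The function name `print_pool_cmds` is made up, and nothing is executed.

```shell
#!/bin/sh
# print_pool_cmds POOL_NAME VG_NAME NODE...
# Prints one `linstor storage-pool create lvm` command per node, with
# the argument order used in this section: node, pool name, volume group.
print_pool_cmds() {
    pool=$1
    vg=$2
    shift 2
    for node in "$@"; do
        echo "linstor storage-pool create lvm $node $pool $vg"
    done
}

print_pool_cmds pool_ssd vg_ssd alpha bravo
```

After reviewing the printed commands, you can run them one by one, and then confirm the result with linstor storage-pool list.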

2.9.2. ストレージプールを使用して、障害領域を単一のバックエンドデバイスに制限する

単一種類のストレージのみが存在し、ストレージデバイスのホットスワップが可能なクラスターでは、1つの物理バッキングデバイスごとに1つのストレージプールを作成するモデルを選択することがあります。このモデルの利点は、障害ドメインを1つのストレージデバイスに限定することです。

2.9.3. 複数のノードでストレージプールを共有する

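The LVM2 storage provider supports configurations in which multiple server nodes are directly attached to a storage array or to drives. To configure LINSTOR pools that use the same LVM2 volume group, accessible from two or more nodes, you can use the `--shared-space` option with the LINSTOR `storage-pool create` command.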

| LINSTORストレージプールのバックエンドとして使用されているボリュームグループ内で論理ボリュームを変更または作成すべきではないのと同様に、共有ボリュームグループに対してこれらの操作を行わないことはさらに重要です。LINSTORによってLVMコマンドの調整が行われている共有ボリュームグループに対して、例えばインタラクティブシェルからLVMコマンドを実行すると、不整合な情報が出力されたり、LVMメタデータが破壊されたりする恐れがあります。起動時などにおけるLVM論理ボリュームの自動アクティブ化も、この警告の対象となります。これらの操作は絶対に行わないでください。このような場合には、抽象化されたLINSTORオブジェクトを介して、LINSTORでLVM論理ボリュームを管理するようにしてください。 |

以下の例では、ノード alpha および bravo からアクセス可能なストレージプールの共有スペース識別子として、LVM2 ボリュームグループの UUID を使用する方法を示しています。

# linstor storage-pool create lvm --external-locking \
  --shared-space O1btSy-UO1n-lOAo-4umW-ETZM-sxQD-qT4V87 \
  alpha pool_ssd shared_vg_ssd
# linstor storage-pool create lvm --external-locking \
  --shared-space O1btSy-UO1n-lOAo-4umW-ETZM-sxQD-qT4V87 \
  bravo pool_ssd shared_vg_ssd

この例では --external-locking オプションも使用しています。このオプションを指定することで、共有ストレージプールに対して LINSTOR 内部の排他制御機構を使用せず、代わりに LVM の機構を使用するように指示します。

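| In LVM2, an external locking service (lvmlockd) manages volume groups that were created with the `--shared` option of the `vgcreate` command. To use the LINSTOR `--external-locking` option, you must specify the LVM `--shared` option when you use `vgcreate` to create the LVM volume group that backs the LINSTOR storage pool. |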

You can omit both the --shared option for vgcreate and the --external-locking option for linstor storage-pool create. If you do this, LINSTOR will use its own mechanism to take care that only one node in the same --shared-space grouping will use LVM. Using the LINSTOR internal mechanism to lock LVM use will not however limit the access to single volumes. Neither the LINSTOR nor the LVM locking mechanism, for example, will limit DRBD I/O.

2.9.4. 物理ストレージコマンドを使用してストレージプールを作成する

linstor-server 1.5.2および最新の linstor-client から、LINSTORはサテライト上でLVM/ZFSプールを作成することができます。 LINSTORクライアントには、可能なディスクをリストアップしストレージプールを作成するための以下のコマンドがありますが、このようなLVM/ZFSプールはLINSTORによって管理されず、削除コマンドもないため、そのような処理はノード上で手動で行う必要があります。

# linstor physical-storage list

これはサイズと回転方式 (SSD/磁気ディスク) でグループ化された使用可能なディスクのリストを表示します。

次のフィルターを通過するディスクのみが表示されます。

- The device size must be greater than 1GiB.
- The device is a root device (not having children), for example, /dev/vda or /dev/sda.
- The device does not have any file system or other blkid marker (wipefs -a might be needed).
- The device is not a DRBD device.
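The four filter rules above can be expressed as a small predicate. This sketch only restates the rules as code to make them easier to reason about. It is not how LINSTOR implements the check, and the function name `is_candidate_disk` and its argument layout are assumptions for the example.

```shell
#!/bin/sh
ONE_GIB=1073741824   # 1GiB in bytes

# is_candidate_disk SIZE_BYTES CHILD_COUNT SIGNATURE IS_DRBD
#   SIZE_BYTES   device size in bytes
#   CHILD_COUNT  number of child devices (for example, partitions)
#   SIGNATURE    file system or other blkid marker, empty if none
#   IS_DRBD      "yes" if the device is a DRBD device, otherwise "no"
# Returns 0 if the device passes all four filters, 1 otherwise.
is_candidate_disk() {
    size_bytes=$1
    child_count=$2
    signature=$3
    is_drbd=$4
    [ "$size_bytes" -gt "$ONE_GIB" ] || return 1   # must be larger than 1GiB
    [ "$child_count" -eq 0 ] || return 1           # must be a root device
    [ -z "$signature" ] || return 1                # must carry no marker
    [ "$is_drbd" = "no" ] || return 1              # must not be a DRBD device
    return 0
}

# Example: an empty 20GiB disk without partitions is a candidate.
if is_candidate_disk 21474836480 0 "" no; then
    echo "candidate"
else
    echo "filtered out"
fi
```

A disk that still carries an old file system signature would be filtered out until the signature is wiped, which is why the list above mentions wipefs -a.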

create-device-pool コマンドを使用すると、ディスクにLVMプールを作成し、それを直接ストレージプールとしてLINSTORに追加することができます。

# linstor physical-storage create-device-pool --pool-name lv_my_pool \
  LVMTHIN node_alpha /dev/vdc --storage-pool newpool

もし --storage-pool オプションが指定された場合、LINSTOR は指定された名前でストレージプールを作成します。

さらなるオプションや正確なコマンドの使用方法については、LINSTOR クライアントの --help テキストを参照してください。

2.9.5. ストレージプールの混合

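With some setup and configuration, you can use storage pools of different storage provider types to back a LINSTOR resource. This is called storage pool mixing. For example, you might have a storage pool on one node that uses an LVM thick-provisioned volume, while on another node you have a storage pool that uses a thin-provisioned ZFS zpool.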

ほとんどの LINSTOR デプロイメントでは、リソースをバックアップするために均質なストレージプールが使用されるため、ストレージプールのミキシングはその機能が存在することを知っておくためにだけここで言及されています。 例えば、ストレージリソースを移行する際に便利な機能かもしれません。 異なるストレージプロバイダーのストレージプールを混在させる には、これに関する詳細や前提条件などが記載されていますので、そちらをご覧ください。

2.10. リソースグループを使用した LINSTOR プロビジョニングされたボリュームのデプロイ

LINSTOR によってプロビジョニングされたボリュームを展開する際に、リソースグループを使用してリソースのプロビジョニング方法を定義することは、デファクトスタンダードと考えられるべきです。その後の章では、特別なシナリオでのみ使用すべきである、リソース定義とボリューム定義から各々のリソースを作成する方法について説明しています。

| LINSTORクラスターでリソースグループを作成および使用しない場合でも、リソース定義およびボリューム定義から作成されたすべてのリソースは、’DfltRscGrp’リソースグループに存在します。 |

リソースグループを使用してリソースをデプロイする簡単なパターンは次のようになります。

# linstor resource-group create my_ssd_group --storage-pool pool_ssd --place-count 2
# linstor volume-group create my_ssd_group
# linstor resource-group spawn-resources my_ssd_group my_ssd_res 20G

上記のコマンドを実行すると ‘pool_ssd’ という名前のストレージプールに参加するノードから、2つに複製された 20GB ボリュームを持つ ‘my_ssd_res’ という名前のリソースが自動的にプロビジョニングされます。

より有効なパターンは、ユースケースが最適であると判断した設定でリソースグループを作成することです。もし一貫性のためボリュームの夜間オンライン検証を実行する必要があるというケースの場合、’verify-alg’ がすでに設定されたリソースグループを作成することで、グループから生成されるリソースは ‘verify-alg’ が事前設定されます。

# linstor resource-group create my_verify_group --storage-pool pool_ssd --place-count 2

# linstor resource-group drbd-options --verify-alg crc32c my_verify_group

# linstor volume-group create my_verify_group

# for i in {00..19}; do

linstor resource-group spawn-resources my_verify_group res$i 10G

done

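The commands above result in twenty 10GiB resources being created, each preconfigured with the crc32c verify-alg.

You can tune the settings of individual resources or volumes spawned from resource groups by setting options on the respective resource definition or volume definition LINSTOR objects. For example, if res11 from the preceding example is used by a very active database that receives many small random writes, you might want to increase the al-extents for that specific resource: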

# linstor resource-definition drbd-options --al-extents 6007 res11

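If you configure a setting in a resource definition that is already configured on the resource group it was spawned from, the value set in the resource definition overrides the value set on the parent resource group. For example, if the same res11 were required to use the slower but more secure sha256 hash algorithm for its verifications, setting verify-alg on the resource definition for res11 would override the value set on the resource group: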

# linstor resource-definition drbd-options --verify-alg sha256 res11

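| A rule of thumb for the hierarchy in which settings are inherited is that the value "closer" to the resource or volume wins. Volume definition settings take precedence over volume group settings, and resource definition settings take precedence over resource group settings. |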
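The "closer value wins" rule can be illustrated with a layered lookup. The following is a minimal sketch, assuming each LINSTOR object's options are modeled as a plain dictionary. The dictionaries mirror the res11 example above, and the effective_options helper is hypothetical, not part of any LINSTOR API.

```python
from collections import ChainMap

# Options set on the resource group (inherited by every spawned resource).
my_verify_group = {"verify-alg": "crc32c"}

# Options set directly on individual resource definitions.
resource_definitions = {
    "res00": {},
    "res11": {"al-extents": "6007", "verify-alg": "sha256"},
}


def effective_options(resource_name: str) -> dict:
    """Merge options so that the resource definition overrides its group."""
    own = resource_definitions[resource_name]
    # ChainMap searches its maps left to right, so the "closer" object wins.
    return dict(ChainMap(own, my_verify_group))


print(effective_options("res00"))
print(effective_options("res11"))
```

Here res00 keeps the group's crc32c setting, while res11 reports sha256 and its own al-extents value.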

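2.12. Creating and Deploying Resources and Volumes

By using the LINSTOR create command, you can create various LINSTOR objects, such as resource definitions, volume definitions, and resources. Some of these commands are shown below.

In the following example scenario, assume that your goal is to create a resource named backups with a size of 500GiB that is replicated among three cluster nodes.

First, create a new resource definition: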

# linstor resource-definition create backups

Next, create a new volume definition within that resource definition:

# linstor volume-definition create backups 500G

If you want to resize (grow or shrink) the volume definition, you can do that by specifying a new size with the set-size command:

# linstor volume-definition set-size backups 0 100G

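| Since version 1.8.0, LINSTOR supports shrinking volume definition sizes. You can even shrink a deployed resource if the resource's storage layers support it. Take great care when shrinking the volume definition size of a resource that holds data. Data loss can occur if you do not take precautions beforehand, such as making backups and shrinking the file system on the volume first. |

The parameter 0 is the number of the volume in the resource backups. You have to provide this parameter because a resource can have multiple volumes, which are identified by a so-called volume number. You can find this number by listing the volume definitions (linstor vd l). The list table shows the numbers for the volumes of each resource.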

So far you have only created definition objects in the LINSTOR database. However, not a single logical volume (LV) has been created on the satellite nodes. Now you have the choice of delegating the task of deploying resources to LINSTOR or else doing it yourself.

2.12.1. Manually Placing Resources

With the resource create command, you can assign a resource definition to named nodes explicitly.

# linstor resource create alpha backups --storage-pool pool_hdd
# linstor resource create bravo backups --storage-pool pool_hdd
# linstor resource create charlie backups --storage-pool pool_hdd

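2.12.2. Automatically Placing DRBD Resources

When you create (spawn) a resource from a resource group, LINSTOR can automatically select nodes and storage pools to deploy the resource to. You can use the arguments mentioned in this section to specify constraints when you create or modify a resource group. These constraints affect how LINSTOR automatically places resources that are deployed from the resource group.

Automatically Maintaining Resource Group Placement Count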

Starting with LINSTOR version 1.26.0, there is a recurring LINSTOR task that tries to maintain the placement count set on a resource group for all deployed LINSTOR resources that belong to that resource group. This includes the default LINSTOR resource group and its placement count.

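If you want to disable this behavior, set the BalanceResourcesEnabled property to false on the LINSTOR controller, on the LINSTOR resource groups that your resources belong to, or on the resource definitions themselves. Because of the LINSTOR object hierarchy, if you set the property on a resource group, it overrides the property value on the LINSTOR controller. Likewise, if you set the property on a resource definition, it overrides the property value on the resource group that the resource definition belongs to.

There are additional properties related to this feature that you can set on the LINSTOR controller:

BalanceResourcesInterval

The interval, at the LINSTOR controller level, at which the balance resource placement task is triggered. By default, the interval is 3600 seconds (one hour).

BalanceResourcesGracePeriod

The number of seconds that new resources (after being created or spawned) are ignored for balancing. By default, the grace period is 3600 seconds (one hour).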

Placement count

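By using the --place-count <replica_count> argument when you create or modify a resource group, you can specify on how many nodes in your cluster LINSTOR should place diskful resources created from the resource group.

|

Creating a resource group with impossible placement constraints

You can create or modify a resource group and specify a placement count or other constraint that is impossible for LINSTOR to fulfill, for example, a placement count greater than three when you only have three nodes in your cluster. When LINSTOR cannot satisfy such a constraint, it shows an error message similar to the following:

ERROR: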

Description:

Not enough available nodes

[...]

|

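Storage Pool Placement

In the following example, the value after the --place-count option tells LINSTOR how many replicas you want to have. The --storage-pool option names the storage pool in which to place them.

# linstor resource-group create backups --place-count 3 --storage-pool pool_hdd

What might not be obvious is that you can omit the --storage-pool option. If you do this, LINSTOR selects a storage pool on its own when you create (spawn) resources from the resource group. The selection follows these rules:

- Ignore all nodes and storage pools that the current user does not have access to.

- Ignore all diskless storage pools.

- Ignore all storage pools that do not have enough free space.

The remaining storage pools are rated by different strategies.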

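MaxFreeSpace

This strategy maps the rating 1:1 to the remaining free space of the storage pool. However, this strategy only considers the space that is actually allocated (for a thin-provisioned storage pool, this can grow over time without creating new resources).

MinReservedSpace

Unlike MaxFreeSpace, this strategy considers the reserved space, that is, the space that a thin volume can grow to before reaching its limit. The sum of reserved space might exceed the storage pool's capacity, which is called overprovisioning.

MinRscCount

Simply counts the number of resources already deployed in a given storage pool.

MaxThroughput

For this strategy, the storage pool's Autoplacer/MaxThroughput property is the base of the score, or 0 if the property is not present. For every volume deployed in the given storage pool, the values of its defined sys/fs/blkio_throttle_read and sys/fs/blkio_throttle_write properties are subtracted from the storage pool's maximum throughput. The resulting score might be negative.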

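The scores of each strategy are normalized, weighted, and summed up. The scores of minimizing strategies are converted first, so that the resulting overall score can be maximized.

You can configure the weights of the strategies to affect how LINSTOR selects a storage pool for resource placement when you create resources without explicitly specifying a storage pool. You do this by setting the following properties on the LINSTOR controller object. A weight can be an arbitrary decimal value.

linstor controller set-property Autoplacer/Weights/MaxFreeSpace <weight>
linstor controller set-property Autoplacer/Weights/MinReservedSpace <weight>
linstor controller set-property Autoplacer/Weights/MinRscCount <weight>
linstor controller set-property Autoplacer/Weights/MaxThroughput <weight>

| To keep the behavior of the Autoplacer compatible with earlier LINSTOR versions, all strategies have a default weight of 0, except MaxFreeSpace, which has a weight of 1. |

| Neither a score of 0 nor a negative score prevents a storage pool from being selected. A storage pool with such a score is only considered later. |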
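To make the "normalize, weight, and sum" description more concrete, here is a rough sketch of such a ranking. It is not LINSTOR's implementation: the min-max normalization, the inversion of minimizing strategies as 1 - score, the rank_pools helper, and the sample pool figures are all assumptions chosen for illustration. Only the strategy names and the default weights come from the text above.

```python
# Strategies where a smaller raw value is better (assumed from their names).
MINIMIZE = {"MinReservedSpace", "MinRscCount"}

# Default weights as described above: only MaxFreeSpace counts.
DEFAULT_WEIGHTS = {
    "MaxFreeSpace": 1.0,
    "MinReservedSpace": 0.0,
    "MinRscCount": 0.0,
    "MaxThroughput": 0.0,
}


def normalize(values):
    """Min-max normalize values into the range 0..1 (assumed scheme)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank_pools(pools, weights=None):
    """Return pool names ordered from best to worst total score."""
    weights = DEFAULT_WEIGHTS if weights is None else weights
    names = list(pools)
    totals = dict.fromkeys(names, 0.0)
    for strategy, weight in weights.items():
        scores = normalize([pools[name][strategy] for name in names])
        if strategy in MINIMIZE:
            # Convert so that a higher score is always better.
            scores = [1.0 - s for s in scores]
        for name, score in zip(names, scores):
            totals[name] += weight * score
    return sorted(names, key=lambda name: totals[name], reverse=True)


# Made-up raw values per storage pool.
pools = {
    "pool_a": {"MaxFreeSpace": 500, "MinReservedSpace": 100,
               "MinRscCount": 10, "MaxThroughput": 0},
    "pool_b": {"MaxFreeSpace": 300, "MinReservedSpace": 50,
               "MinRscCount": 2, "MaxThroughput": 0},
}

print(rank_pools(pools))
print(rank_pools(pools, {"MaxFreeSpace": 1.0, "MinRscCount": 2.0}))
```

With the default weights, the pool with more free space ranks first. Giving MinRscCount a larger weight flips the order in favor of the pool with fewer deployed resources.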

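Finally, LINSTOR tries to find the best matching group of storage pools that meets all requirements. This step also considers other auto-placement restrictions, such as --replicas-on-same, --replicas-on-different, --do-not-place-with, --do-not-place-with-regex, --layer-list, and --providers.

Avoiding Colocating Resources When Automatically Placing a Resource

The --do-not-place-with <resource_name_to_avoid> argument tells LINSTOR to avoid placing the resource on nodes that already have the specified resource_name_to_avoid resource deployed.

By using the --do-not-place-with-regex <regular_expression> argument, you can tell LINSTOR to avoid placing the resource on nodes that already have a deployed resource whose name matches the regular expression that you provide. In this way, you can specify multiple resources to avoid.

Constraining Automatic Resource Placement by Using Auxiliary Node Properties

You can constrain automatic resource placement to place (or avoid placing) a resource on nodes that have a specified auxiliary node property.

This ability can be useful in several scenarios. One is constraining resource placement in Kubernetes environments that use LINSTOR managed storage. For example, you might set an auxiliary node property that corresponds to a Kubernetes label. For more details about this use case, see the "replicasOnSame" section within the "LINSTOR Volumes in Kubernetes" chapter of this guide.

By using the --x-replicas-on-different argument, you can also constrain automatic resource placement. This is useful when you use LINSTOR to manage storage resources across multiple data centers, such as in a stretched cluster configuration. Because this argument takes a different form than the --replicas-on-same and --replicas-on-different arguments, it is described later in its own section, Automatically Placing Resources on Different Nodes for Disaster Recovery.

The --replicas-on-same and --replicas-on-different arguments expect the name of a property within the Aux/ namespace.

| The --x-replicas-on-different option also treats LINSTOR objects that do not have the auxiliary property set as "different". For example, in a cluster with five satellite nodes, if nodes 1 and 2 have the value dc1 for the auxiliary property site, nodes 3 and 4 have the value dc2, and node 5 does not have the auxiliary property set, there are three "different" groups of nodes. |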

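The following example sets an auxiliary node property, testProperty, on three LINSTOR satellite nodes. Next, it creates a resource group, testRscGrp, with a placement count of two and a constraint to place spawned resources on nodes that have a testProperty value of 1. After creating a volume group, you can spawn a resource from the resource group. For brevity, output from the following commands is not shown.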

# for i in {0,2}; do linstor node set-property --aux node-$i testProperty 1; done

# linstor node set-property --aux node-1 testProperty 0

# linstor resource-group create testRscGrp --place-count 2 --replicas-on-same testProperty=1

# linstor volume-group create testRscGrp

# linstor resource-group spawn-resources testRscGrp testResource 100M

You can verify the placement of the spawned resource by entering the following command:

# linstor resource list

Output from the command shows a list of resources and the nodes on which LINSTOR has placed them.

╭────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceName ┊ Node   ┊ Port ┊ Usage  ┊ Conns ┊ State    ┊ CreatedOn           ┊
╞════════════════════════════════════════════════════════════════════════════════╡
┊ testResource ┊ node-0 ┊ 7000 ┊ Unused ┊ Ok    ┊ UpToDate ┊ 2022-07-27 16:14:16 ┊
┊ testResource ┊ node-2 ┊ 7000 ┊ Unused ┊ Ok    ┊ UpToDate ┊ 2022-07-27 16:14:16 ┊
╰────────────────────────────────────────────────────────────────────────────────╯

Because of the --replicas-on-same constraint, LINSTOR did not place the spawned resource on satellite node node-1, because the value of its auxiliary node property testProperty was 0 and not 1.

You can verify the node properties of node-1 by using the list-properties command:

# linstor node list-properties node-1
╭────────────────────────────╮
┊ Key              ┊ Value   ┊
╞════════════════════════════╡
┊ Aux/testProperty ┊ 0       ┊
┊ CurStltConnName  ┊ default ┊
┊ NodeUname        ┊ node-1  ┊
╰────────────────────────────╯
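The effect of the --replicas-on-same testProperty=1 constraint in this example can be reproduced with a simple filter. This is a sketch of the selection rule only, with node properties modeled as hypothetical dictionaries. It does not call LINSTOR.

```python
# Auxiliary node properties as set in the example above.
aux_props = {
    "node-0": {"testProperty": "1"},
    "node-1": {"testProperty": "0"},
    "node-2": {"testProperty": "1"},
}


def nodes_matching(props_by_node, key, value):
    """Return the nodes whose auxiliary property `key` equals `value`."""
    return sorted(
        node for node, props in props_by_node.items()
        if props.get(key) == value
    )


eligible = nodes_matching(aux_props, "testProperty", "1")
print(eligible)

# A placement count of 2 can be satisfied because two nodes qualify.
place_count = 2
print(len(eligible) >= place_count)
```

Only node-0 and node-2 qualify, which matches the placement shown in the resource list output.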

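Unsetting Autoplacement Properties

To unset an autoplacement property that you set on a resource group, you can use the following command syntax:

# linstor resource-group modify <resource-group-name> --<autoplacement-property>

Alternatively, you can follow the --<autoplacement-property> argument with an empty string, as in:

# linstor resource-group modify <resource-group-name> --<autoplacement-property> ''

For example, to unset the --replicas-on-same autoplacement property on the testRscGrp resource group that was set in an earlier example, enter the following command: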

# linstor resource-group modify testRscGrp --replicas-on-same

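Automatically Placing Resources on Different Nodes for Disaster Recovery

By using the --x-replicas-on-different argument when creating or modifying various LINSTOR objects, you can configure LINSTOR to automatically place resources in different groups of LINSTOR nodes, where each distinct value of an auxiliary property represents a different group. The argument takes the following form:

--x-replicas-on-different <auxiliary-property-name> <positive-integer>

The positive integer is the maximum number of resource replicas that LINSTOR can place on nodes that have the same value for the specified auxiliary property.

The most common use case for this feature is to ensure that you always have at least two local resource replicas for high availability and one off-site resource replica for disaster recovery. For example, assume that you have set an auxiliary property, site, on the nodes of a stretched LINSTOR cluster to label each node by its physical location, as follows: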

╭─────────────────────────────────────────────────────────────────╮
┊ Node           ┊ NodeType   ┊ Addresses ┊ AuxProps     ┊ State  ┊
╞═════════════════════════════════════════════════════════════════╡
┊ linstor-ctrl-0 ┊ CONTROLLER ┊ [...]     ┊              ┊ Online ┊
┊ linstor-sat-0  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc1 ┊ Online ┊
┊ linstor-sat-1  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc1 ┊ Online ┊
┊ linstor-sat-2  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
┊ linstor-sat-3  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
┊ linstor-sat-4  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
╰─────────────────────────────────────────────────────────────────╯

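Further assume that you have created a resource group, myrg, that is associated with the same LINSTOR storage pool on all five satellite nodes. You can then enter the following command to create a new resource, myres, and make sure that LINSTOR places two replicas at one site and a third replica at the other site:

linstor resource-group spawn \
    --x-replicas-on-different site 2 \
    --place-count 3 \
    myrg myres 200G

After LINSTOR creates the resource, entering a linstor resource list --resource myres command might show output similar to this: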

╭─────────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceName ┊ Node          ┊ Layers       ┊ Usage  ┊ Conns ┊ State    ┊ CreatedOn ┊
╞═════════════════════════════════════════════════════════════════════════════════════╡
┊ myres        ┊ linstor-sat-0 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
┊ myres        ┊ linstor-sat-1 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
┊ myres        ┊ linstor-sat-2 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
╰─────────────────────────────────────────────────────────────────────────────────────╯

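By specifying 2 as the maximum number of resource replicas that LINSTOR can place on either the dc1 or the dc2 nodes, and specifying a placement count of 3, you ensure that LINSTOR places three resource replicas: two together at one site for local high availability, and a third off-site for disaster recovery purposes.

Other combinations of --x-replicas-on-different and --place-count values are possible. However, you need to choose them sensibly. For example, if you specified --x-replicas-on-different site 1 and --place-count 3 when creating the myres resource in the example LINSTOR cluster above, LINSTOR would refuse to create the resource and show a "Not enough available nodes" error message.

With the node labeling used here (through auxiliary properties), restricting LINSTOR to at most one resource replica per distinct value of the auxiliary property, while also asking for three resource replicas, means asking LINSTOR to do the impossible, because only two distinct site values exist.

| Specifying --x-replicas-on-different $X 1 gives exactly the same placement result as specifying --replicas-on-different $X. |

Be aware that, as implemented, the --x-replicas-on-different option considers LINSTOR objects that do not have the auxiliary property set as "different". This is the same as the behavior of the --replicas-on-different option. For example, consider the following LINSTOR cluster: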

╭─────────────────────────────────────────────────────────────────╮
┊ Node           ┊ NodeType   ┊ Addresses ┊ AuxProps     ┊ State  ┊
╞═════════════════════════════════════════════════════════════════╡
┊ linstor-ctrl-0 ┊ CONTROLLER ┊ [...]     ┊              ┊ Online ┊
┊ linstor-sat-0  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc1 ┊ Online ┊
┊ linstor-sat-1  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc1 ┊ Online ┊
┊ linstor-sat-2  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
┊ linstor-sat-3  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
┊ linstor-sat-4  ┊ SATELLITE  ┊ [...]     ┊ Aux/site=dc2 ┊ Online ┊
┊ linstor-sat-5  ┊ SATELLITE  ┊ [...]     ┊              ┊ Online ┊
┊ linstor-sat-6  ┊ SATELLITE  ┊ [...]     ┊              ┊ Online ┊
╰─────────────────────────────────────────────────────────────────╯

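If you try to create the myres resource by using the "impossible" command mentioned earlier, this time the command succeeds:

linstor resource-group spawn \
    --x-replicas-on-different site 1 \
    --place-count 3 \
    myrg myres 200G

One possible resource placement that satisfies the specified constraints is as follows: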

╭─────────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceName ┊ Node          ┊ Layers       ┊ Usage  ┊ Conns ┊ State    ┊ CreatedOn ┊
╞═════════════════════════════════════════════════════════════════════════════════════╡
┊ myres        ┊ linstor-sat-0 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
┊ myres        ┊ linstor-sat-2 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
┊ myres        ┊ linstor-sat-5 ┊ DRBD,STORAGE ┊ Unused ┊ Ok    ┊ UpToDate ┊ [...]     ┊
╰─────────────────────────────────────────────────────────────────────────────────────╯

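Because the site auxiliary property was not set on the linstor-sat-5 node, that node counted as belonging to a separate group for the purposes of the --x-replicas-on-different constraint. In this example cluster, there are three different groups of nodes with respect to the site auxiliary property: dc1, dc2, and the nodes that do not have the auxiliary property set. For this reason, LINSTOR could place three resources while satisfying both the --x-replicas-on-different constraint and the --place-count constraint.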
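The arithmetic behind these three examples can be checked with a short feasibility sketch. It models only the counting rule described above (at most a given number of replicas per distinct property value, with unlabeled nodes forming one extra group) and ignores storage pools and all other constraints. The max_placeable and can_place helpers are hypothetical names, not LINSTOR functions.

```python
from collections import Counter


def max_placeable(site_by_node, limit):
    """Upper bound on replicas when each group may hold at most `limit`.

    Nodes without the property (value None) are counted as one more group.
    """
    group_sizes = Counter(site_by_node.values())
    return sum(min(size, limit) for size in group_sizes.values())


def can_place(site_by_node, limit, place_count):
    """Return True if `place_count` replicas fit under the per-group limit."""
    return max_placeable(site_by_node, limit) >= place_count


five_nodes = {
    "linstor-sat-0": "dc1", "linstor-sat-1": "dc1",
    "linstor-sat-2": "dc2", "linstor-sat-3": "dc2", "linstor-sat-4": "dc2",
}
# The second example cluster adds two nodes without the site property.
seven_nodes = {**five_nodes, "linstor-sat-5": None, "linstor-sat-6": None}

print(can_place(five_nodes, limit=2, place_count=3))   # site 2, place count 3
print(can_place(five_nodes, limit=1, place_count=3))   # the "impossible" case
print(can_place(seven_nodes, limit=1, place_count=3))  # succeeds with a third group
```

The first and third calls report that placement is possible, while the second does not, which mirrors the outcomes described in the text.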

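Constraining Automatic Resource Placement by LINSTOR Layers or Storage Pool Providers

You can specify the --layer-list or --providers arguments, followed by a comma-separated values (CSV) list of LINSTOR layers or storage pool providers, to influence where LINSTOR places resources. You can show the possible layers and storage pool providers that you can specify in your CSV list by using the --help option together with the --auto-place option. A CSV list of layers constrains automatic resource placement for a specified resource group to nodes that have storage that conforms to your list. Consider the following command:

# linstor resource-group create my_luks_rg --place-count 3 --layer-list drbd,luks

Resources that you later create (spawn) from this resource group are deployed on three nodes that have storage pools backed by a DRBD layer on top of a LUKS layer (and, implicitly, a "storage" layer). The order of layers that you specify in your CSV list is "top-down": a layer on the left in the list is above the layers to its right.

By using the --providers argument, you can constrain automatic resource placement to only storage pools that match the specified CSV list. With this argument, you have explicit control over which storage pools back your deployed resources. For example, if you had a mixed environment of ZFS, LVM, and LVM_THIN storage pools in your cluster, by using the --providers LVM,LVM_THIN argument together with the --place-count option, you can specify that a resource is backed only by either an LVM or an LVM_THIN storage pool.

| The --providers argument's CSV list does not specify an order of priority for the list elements. Rather, LINSTOR will use factors like additional placement constraints, available free space, and LINSTOR storage pool selection strategies that were previously described, when placing a resource. |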

Automatically Placing Resources When Creating Them

While the standard way to create resources is to use a resource group as a template from which to spawn resources, you can also create resources directly by using the resource create command. When you use this command, you can also specify arguments that affect how LINSTOR places the resource in your storage cluster.

With the exception of the placement count argument, the arguments that you can specify with the resource create command are the same as those that affect the resource-group create command. Specifying an --auto-place <replica_count> argument with a resource create command is the same as specifying a --place-count <replica_count> argument with a resource-group create command.

Using Auto-place to Extend Existing Resource Deployments

Besides the argument name, there is another difference between the placement count arguments of the resource-group create and resource create commands. With the resource create command, you can also specify a value of +1 with the --auto-place argument, if you want to extend an existing resource deployment.

By using this value, LINSTOR creates an additional replica, regardless of what --place-count is configured on the resource group that the resource was created from.

For example, you can use an --auto-place +1 argument to deploy an additional replica of the testResource resource used in an earlier example. You first need to set the auxiliary node property testProperty to 1 on node-1. Otherwise, LINSTOR cannot deploy the replica because of the previously configured --replicas-on-same constraint. For simplicity, not all output from the commands below is shown.

# linstor node set-property --aux node-1 testProperty 1
# linstor resource create --auto-place +1 testResource
# linstor resource list
╭────────────────────────────────────────────────────────────────────────────────╮
┊ ResourceName ┊ Node   ┊ Port ┊ Usage  ┊ Conns ┊ State    ┊ CreatedOn           ┊
╞════════════════════════════════════════════════════════════════════════════════╡
┊ testResource ┊ node-0 ┊ 7000 ┊ Unused ┊ Ok    ┊ UpToDate ┊ 2022-07-27 16:14:16 ┊
┊ testResource ┊ node-1 ┊ 7000 ┊ Unused ┊ Ok    ┊ UpToDate ┊ 2022-07-28 19:27:30 ┊
┊ testResource ┊ node-2 ┊ 7000 ┊ Unused ┊ Ok    ┊ UpToDate ┊ 2022-07-27 16:14:16 ┊
╰────────────────────────────────────────────────────────────────────────────────╯

| You cannot specify a value of +1 with the resource-group create --place-count command. This is because the command does not deploy resources; it only creates a template from which to deploy them later. |

2.13. Creating File Systems on Storage Volumes

For convenience, rather than running mkfs on a LINSTOR satellite node after deploying storage resources and volumes, you can have LINSTOR create file systems automatically. To do this, use the linstor resource-group set-property subcommand to set the FileSystem/Type key to either ext4 or xfs, and then spawn resources from that resource group. By setting additional FileSystem properties, you can also specify the owner and group of the file system, or pass additional file system specific parameters to the mkfs command that LINSTOR runs in the background.

For example, if you have a LINSTOR resource group named nfs-rg, the following series of commands creates an XFS file system on the LINSTOR storage volume of a resource named nfs-res:

linstor resource-group set-property nfs-rg FileSystem/Type xfs (1)
linstor resource-group set-property nfs-rg FileSystem/User nobody (2)
linstor resource-group set-property nfs-rg FileSystem/Group nobody (2)
linstor resource-group spawn-resources nfs-rg nfs-res 100G

| 1 | You can specify either ext4 or xfs. |

| 2 | If the property is not set, the default is root. |

Enter a resource-group list-properties command to verify the resource group's properties, for example:

linstor resource-group list-properties nfs-rg

Output shows the set properties:

╭───────────────────────────╮
┊ Key              ┊ Value  ┊
╞═══════════════════════════╡
┊ FileSystem/Group ┊ nobody ┊
┊ FileSystem/Type  ┊ xfs    ┊
┊ FileSystem/User  ┊ nobody ┊
╰───────────────────────────╯

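By using the blkid command on a satellite node, you can verify that LINSTOR created the file system on the storage volume:

[...] /dev/drbd1004: UUID="[...]" TYPE="xfs" [...]

After mounting the DRBD device to a directory on the satellite node, you can use the ls -l command to show ownership information, for example: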

drwxrwxrwx. 2 nobody nobody 6 Sep 26 18:56 data

2.13.1. Specifying Additional mkfs Parameters

Besides the FileSystem/Type, FileSystem/User, and FileSystem/Group keys, you can use FileSystem/MkfsParams to specify additional parameters that LINSTOR passes to the mkfs command when it creates a file system on a storage volume. For example, you can label the file system that LINSTOR creates:

linstor resource-group set-property nfs-rg FileSystem/MkfsParams '-L nfs-data'

For other possible parameters, refer to the mkfs.ext4 and mkfs.xfs manual pages.

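2.14. Deleting Resources, Resource Definitions, and Resource Groups

You can delete LINSTOR resources, resource definitions, and resource groups by using the delete command after the LINSTOR object that you want to delete. Depending on which object you delete, there are different implications for your LINSTOR cluster and other associated LINSTOR objects.

2.14.1. Deleting a Resource Definition

You can delete a resource definition by using the command: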

# linstor resource-definition delete <resource_definition_name>

This will remove the named resource definition from the entire LINSTOR cluster. The resource is removed from all nodes and the resource entry is marked for removal from the LINSTOR database tables. After LINSTOR has removed the resource from all the nodes, the resource entry is removed from the LINSTOR database tables.

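| If the resource definition has existing snapshots, you cannot delete the resource definition until you delete its snapshots. See the Removing a Snapshot section in this guide. |

2.14.2. Deleting a Resource

You can delete a resource by using the command: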

# linstor resource delete <node_name> <resource_name>

Unlike deleting a resource definition, this command will only delete a LINSTOR resource from the node (or nodes) that you specify. The resource is removed from the node and the resource entry is marked for removal from the LINSTOR database tables. After LINSTOR has removed the resource from the node, the resource entry is removed from the LINSTOR database tables.

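Deleting a LINSTOR resource might have implications for the cluster beyond just removing the resource. For example, if the resource is backed by a DRBD layer, removing the resource from one node in a three-node cluster could also remove certain quorum-related DRBD options, if any existed for the resource. After removing such a resource from a node in a three-node cluster, the resource would no longer have quorum because it would only be deployed on two nodes.

After running a linstor resource delete command to remove a resource from a single node, you might see informational messages such as: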

INFO:

Resource-definition property 'DrbdOptions/Resource/quorum' was removed as there are not enough resources for quorum

INFO:

Resource-definition property 'DrbdOptions/Resource/on-no-quorum' was removed as there are not enough resources for quorum

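Also unlike deleting a resource definition, you can delete a resource while there are existing snapshots of the resource's storage pool. Any existing snapshots for the resource's storage pool persist.

2.14.3. Deleting a Resource Group

You can delete a resource group by using the command:

# linstor resource-group delete <resource_group_name>

As you might expect, this command deletes the named resource group. You can only delete a resource group if it has no associated resource definitions. Otherwise, LINSTOR shows an error message such as: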

ERROR:

Description:

Cannot delete resource group 'my_rg' because it has existing resource definitions.

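To resolve this error so that you can delete the resource group, either delete the associated resource definitions, or move the resource definitions to another (existing) resource group:

# linstor resource-definition modify <resource_definition_name> \
    --resource-group <another_resource_group_name>

You can find which resource definitions are associated with your resource group by entering the following command: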

# linstor resource-definition list

2.15. Backing Up and Restoring the Database

Since version 1.24.0, LINSTOR has a tool that you can use to export and import a LINSTOR database.

This tool has an executable file, /usr/share/linstor-server/bin/linstor-database. The executable has two subcommands, export-db and import-db. Both subcommands accept an optional --config-directory argument that you can use to specify the directory that contains the linstor.toml configuration file.

| To ensure a consistent database backup, take the controller offline by stopping the controller service, as shown in the commands below, before creating a backup of the LINSTOR database. |

2.15.1. Backing Up the Database

To back up the LINSTOR database to a new file named db_export in your home directory, enter the following commands:

# systemctl stop linstor-controller
# /usr/share/linstor-server/bin/linstor-database export-db ~/db_export
# systemctl start linstor-controller

| If needed, you can use the --config-directory argument with the linstor-database utility to specify a LINSTOR configuration directory. If you omit this argument, the utility uses the /etc/linstor directory by default. |

After backing up the database, copy the backup file to a safe place:

# cp ~/db_export <somewhere safe>

The resulting database backup is a plain JSON document that contains not only the actual data, but also metadata such as when the backup was created, from which database, and other information.

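2.15.2. Restoring the Database From a Backup

Restoring the database from a previously made backup is similar to the export-db procedure described in the previous section.

For example, to restore the previously made backup from the db_export file, enter the following commands:

# systemctl stop linstor-controller
# /usr/share/linstor-server/bin/linstor-database import-db ~/db_export
# systemctl start linstor-controller

When importing a database from a previous backup, the currently installed LINSTOR version must be the same as (or higher than) the version that created the backup. If the installed version is higher, the data is restored with the same database schema as the version used during the export. The next time the controller starts, it detects that the database has an old schema and automatically migrates the data to the schema of the current version.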
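The version requirement can be expressed as a simple comparison. The sketch below only illustrates the stated rule. It assumes plain dotted numeric version strings and uses hypothetical helper names, and it does not read any field from an actual backup file.

```python
def parse_version(text):
    """Turn a dotted version string such as '1.24.0' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def can_import(installed_version, backup_version):
    """Return True if the installed version is the same as or newer than the backup's."""
    return parse_version(installed_version) >= parse_version(backup_version)


print(can_import("1.26.0", "1.24.0"))  # newer installation: import allowed
print(can_import("1.24.0", "1.26.0"))  # older installation: import not allowed
print(can_import("1.24.0", "1.9.0"))   # numeric, not alphabetical, comparison
```

Comparing tuples of integers rather than raw strings matters for the last case, because "1.9.0" sorts after "1.24.0" alphabetically even though it is the older version.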

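2.15.3. Converting Databases

Because the exported database file contains some metadata, you can import it into a different database type than it was exported from.

This allows you, for example, to convert from an etcd setup to an SQL-based setup. There is no special command for converting the database format. You only have to specify the correct linstor.toml configuration file by using the --config-directory argument, or update the default /etc/linstor/linstor.toml to specify the database type that you want to use before importing. For more information about specifying a database type, refer to the LINSTOR User's Guide. Regardless of which database type the backup was created from, the data is imported into the database type specified in the linstor.toml configuration file.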

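3. Advanced LINSTOR Tasks

3.1. Creating a Highly Available LINSTOR Cluster

By default, a LINSTOR cluster consists of exactly one active LINSTOR controller node. Making LINSTOR highly available involves providing replicated storage for the controller database, and having multiple LINSTOR controller nodes, of which only one is active at any time. In addition, a service manager (here, DRBD Reactor) takes care of mounting and unmounting the highly available storage, and of starting and stopping the LINSTOR controller service on nodes.

3.1.1. Configuring Highly Available LINSTOR Database Storage

You can use LINSTOR itself to configure the highly available storage. One benefit of having the storage under LINSTOR control is that you can easily extend the HA storage to new cluster nodes.

Creating a Resource Group for the HA LINSTOR Database Storage Resource

First, create a resource group, named linstor-db-grp here, from which you will later spawn the resource that backs the LINSTOR database. You need to adapt the storage pool name to match an existing storage pool in your environment.

# linstor resource-group create \
    --storage-pool my-thin-pool \
    --place-count 3 \
    --diskless-on-remaining true \
    linstor-db-grp

Next, set the necessary DRBD options on the resource group. Resources that you spawn from the resource group inherit these options.

# linstor resource-group drbd-options \
    --auto-promote=no \
    --quorum=majority \
    --on-suspended-primary-outdated=force-secondary \
    --on-no-quorum=io-error \
    --on-no-data-accessible=io-error \
    linstor-db-grp

| It is crucial that your cluster qualifies for auto-quorum and uses the io-error policy (see the section Auto-Quorum Policies), and that auto-promote is disabled. |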

3.1.2. Moving the LINSTOR Database to HA Storage

After creating the linstor_db resource, you can move the LINSTOR database to the new storage and create a systemd mount service. First, stop the current controller service and disable it, because DRBD Reactor will manage it later.

# systemctl disable --now linstor-controller

Next, create the systemd mount service.

# cat << EOF > /etc/systemd/system/var-lib-linstor.mount

[Unit]

Description=Filesystem for the LINSTOR controller

[Mount]

# you can use the minor like /dev/drbdX or the udev symlink

What=/dev/drbd/by-res/linstor_db/0

Where=/var/lib/linstor

EOF

# mv /var/lib/linstor{,.orig}

# mkdir /var/lib/linstor

# chattr +i /var/lib/linstor # only if on LINSTOR >= 1.14.0

# drbdadm primary linstor_db

# mkfs.ext4 -b 4096 /dev/drbd/by-res/linstor_db/0

# systemctl start var-lib-linstor.mount

# cp -r /var/lib/linstor.orig/* /var/lib/linstor

# systemctl start linstor-controller

Copy the /etc/systemd/system/var-lib-linstor.mount mount file to all the cluster nodes that might run the LINSTOR controller service (the standby controller nodes). Again, do not systemctl enable any of these services, because DRBD Reactor manages them.

3.1.3. Multiple LINSTOR Controllers

The next step is to install LINSTOR controllers on all nodes that have access to the linstor_db DRBD resource (because they need to mount the DRBD volume) and that you want to become possible LINSTOR controllers. It is important that the controllers are managed by drbd-reactor, so make sure that linstor-controller.service is disabled on all nodes. To be sure, run systemctl disable linstor-controller on all cluster nodes, and systemctl stop linstor-controller on all nodes except the one where the controller is currently running from the previous step. Also, if your LINSTOR version is 1.14.0 or later, verify that chattr +i /var/lib/linstor is set on all potential controller nodes.

3.1.4. Managing the Services

Use DRBD Reactor to start and stop the mount service and the linstor-controller service. Install this component on all nodes that could become a LINSTOR controller and edit their /etc/drbd-reactor.d/linstor_db.toml configuration file. It should contain an enabled promoter plugin section like this:

[[promoter]]
[promoter.resources.linstor_db]
start = ["var-lib-linstor.mount", "linstor-controller.service"]

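Depending on your requirements, you might also want to set an on-stop-failure action and set stop-services-on-exit.

After that, restart drbd-reactor and enable it on all the nodes that you configured it on.

# systemctl restart drbd-reactor
# systemctl enable drbd-reactor

Check that there are no warnings from the drbd-reactor service in the logs by running systemctl status drbd-reactor. Because there is already an active LINSTOR controller, things will stay the way they are. Run drbd-reactorctl status linstor_db to check the health of the linstor_db target unit.

The last but nevertheless important step is to configure the LINSTOR satellite services so that they do not delete (and then regenerate) the resource file for the LINSTOR controller DB at startup. Do not edit the service files directly; use systemctl edit instead. Edit the service file on all nodes that could become a LINSTOR controller and that are also LINSTOR satellites.

# systemctl edit linstor-satellite
[Service]
Environment=LS_KEEP_RES=linstor_db

After this change, run systemctl restart linstor-satellite on all satellite nodes.

| Be sure to configure your LINSTOR client for use with multiple controllers, as described in the section titled Using the LINSTOR Client, and verify that you have also configured your integration plugins (for example, the Proxmox plugin) to be ready for multiple LINSTOR controllers. |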

3.2. DRBD Clients

By using the --drbd-diskless option instead of --storage-pool, you can have a permanently diskless DRBD device on a node. This means that the resource appears as a block device and can be mounted to the file system without an existing storage device. The data of the resource is accessed over the network on other nodes that have the same resource.

# linstor resource create delta backups --drbd-diskless

| The --diskless option is deprecated. Use the --drbd-diskless or --nvme-initiator options instead. |

3.3. LINSTOR – DRBD Consistency Groups/Multiple Volumes

The so-called consistency group is a DRBD feature. It is mentioned in this guide because one of LINSTOR's main functions is to manage storage clusters with DRBD. Multiple volumes in one resource form a consistency group.

This means that changes to different volumes in one resource are replicated in the same chronological order on the other satellites.

Therefore, you do not need to worry about timing if you have interdependent data on different volumes in a resource.

To deploy more than one volume in a LINSTOR resource, you have to create two volume definitions with the same resource name.

# linstor volume-definition create backups 500G
# linstor volume-definition create backups 100G

3.4. Placing Volumes of One Resource in Different Storage Pools

By setting the StorPoolName property on the volume definitions before you deploy the resource to the nodes, you can place the volumes of one resource in different storage pools:

# linstor resource-definition create backups
# linstor volume-definition create backups 500G
# linstor volume-definition create backups 100G
# linstor volume-definition set-property backups 0 StorPoolName pool_hdd
# linstor volume-definition set-property backups 1 StorPoolName pool_ssd
# linstor resource create alpha backups
# linstor resource create bravo backups
# linstor resource create charlie backups

| Because the volume-definition create commands were used without the --vlmnr option, LINSTOR assigned volume numbers starting at 0. The 0 and 1 in the two volume-definition set-property commands refer to these automatically assigned volume numbers. |

Here, the resource create commands do not need a --storage-pool option. In this case, LINSTOR uses a 'fallback' storage pool. To find that storage pool, LINSTOR queries the properties of the following objects, in this order:

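- Volume definition

- Resource

- Resource definition

- Node

If none of these objects contains a StorPoolName property, the controller falls back to the hard-coded string 'DfltStorPool' as the storage pool name.

This also means that if you forgot to define a storage pool before deploying a resource, you will get an error message from LINSTOR saying that it could not find a storage pool named 'DfltStorPool'.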
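The lookup order described above can be sketched as a short function. Object properties are modeled here as plain dictionaries and resolve_storage_pool is a hypothetical helper. Only the StorPoolName key, the query order, and the 'DfltStorPool' fallback come from the text.

```python
DEFAULT_STORAGE_POOL = "DfltStorPool"


def resolve_storage_pool(volume_definition, resource, resource_definition, node):
    """Return the first StorPoolName found, searching in the documented order."""
    for props in (volume_definition, resource, resource_definition, node):
        name = props.get("StorPoolName")
        if name:
            return name
    # No object defines the property: fall back to the hard-coded name.
    return DEFAULT_STORAGE_POOL


# Volume 0 of 'backups' has the property set on its volume definition.
print(resolve_storage_pool({"StorPoolName": "pool_hdd"}, {}, {}, {}))

# Only the node defines a storage pool name.
print(resolve_storage_pool({}, {}, {}, {"StorPoolName": "pool_ssd"}))

# Nothing is set anywhere, so the default name is used.
print(resolve_storage_pool({}, {}, {}, {}))
```

The last call shows why a missing storage pool definition surfaces as an error about 'DfltStorPool': that name is what the lookup returns when nothing else is configured.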

3.5. LINSTOR Without DRBD

You can use LINSTOR without DRBD as well. Without DRBD, LINSTOR can provision volumes from LVM and ZFS backed storage pools, and create those volumes on individual nodes in your LINSTOR cluster.

Currently, LINSTOR supports creating LVM and ZFS volumes, with the option of layering some combination of LUKS, DRBD, or NVMe-oF/NVMe-TCP on top of those volumes.

For example, assume that you have a thin LVM backed storage pool, named thin-lvm, defined in your LINSTOR cluster:

# linstor storage-pool list
╭──────────────────────────────────────────────────────────────╮
┊ StoragePool ┊ Node      ┊ Driver   ┊ PoolName          ┊ ... ┊
╞══════════════════════════════════════════════════════════════╡
┊ thin-lvm    ┊ linstor-a ┊ LVM_THIN ┊ drbdpool/thinpool ┊ ... ┊
┊ thin-lvm    ┊ linstor-b ┊ LVM_THIN ┊ drbdpool/thinpool ┊ ... ┊
┊ thin-lvm    ┊ linstor-c ┊ LVM_THIN ┊ drbdpool/thinpool ┊ ... ┊
┊ thin-lvm    ┊ linstor-d ┊ LVM_THIN ┊ drbdpool/thinpool ┊ ... ┊
╰──────────────────────────────────────────────────────────────╯

You can use LINSTOR to create a 100GiB thin LVM volume on linstor-d by using the following commands:

# linstor resource-definition create rsc-1

# linstor volume-definition create rsc-1 100GiB

# linstor resource create --layer-list storage \

--storage-pool thin-lvm linstor-d rsc-1

You should then see a new thin LVM volume on linstor-d. You can extract the device path from LINSTOR by listing your resources with the --machine-readable flag set:

# linstor --machine-readable resource list | grep device_path

"device_path": "/dev/drbdpool/rsc-1_00000",

If you wanted to layer DRBD on top of this volume, which is the default --layer-list option in LINSTOR for ZFS or LVM backed volumes, you would use the following resource creation pattern instead:

# linstor resource-definition create rsc-1

# linstor volume-definition create rsc-1 100GiB

# linstor resource create --layer-list drbd,storage \

--storage-pool thin-lvm linstor-d rsc-1

You would then see that a new thin LVM volume on linstor-d is backing the DRBD volume:

# linstor --machine-readable resource list | grep -e device_path -e backing_disk

"device_path": "/dev/drbd1000",

"backing_disk": "/dev/drbdpool/rsc-1_00000",

The following table shows which layer can be followed by which child layer:

| Layer | Child layer |
|---|---|
| DRBD | CACHE, WRITECACHE, NVME, LUKS, STORAGE |
| CACHE | WRITECACHE, NVME, LUKS, STORAGE |
| WRITECACHE | CACHE, NVME, LUKS, STORAGE |
| NVME | CACHE, WRITECACHE, LUKS, STORAGE |
| LUKS | STORAGE |
| STORAGE | – |

| One layer can only occur once in the layer list. |

| For information about the prerequisites for the luks layer, refer to the Encrypted Volumes section of this guide. |
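The table and the "only once" rule can be turned into a small checker for a --layer-list value. This is an illustrative sketch, not LINSTOR's own validation: it assumes that the table describes which layer may appear directly below another, and validate_layer_list is a hypothetical helper name.

```python
# Allowed child layers, copied from the table above.
ALLOWED_CHILDREN = {
    "DRBD": {"CACHE", "WRITECACHE", "NVME", "LUKS", "STORAGE"},
    "CACHE": {"WRITECACHE", "NVME", "LUKS", "STORAGE"},
    "WRITECACHE": {"CACHE", "NVME", "LUKS", "STORAGE"},
    "NVME": {"CACHE", "WRITECACHE", "LUKS", "STORAGE"},
    "LUKS": {"STORAGE"},
    "STORAGE": set(),
}


def validate_layer_list(csv):
    """Return a list of problems found in a top-down, comma-separated layer list."""
    layers = [name.strip().upper() for name in csv.split(",")]
    problems = []
    for name in layers:
        if name not in ALLOWED_CHILDREN:
            problems.append(f"unknown layer {name}")
    if len(set(layers)) != len(layers):
        problems.append("a layer can only occur once")
    # Check each adjacent (parent, child) pair against the table.
    for parent, child in zip(layers, layers[1:]):
        if parent in ALLOWED_CHILDREN and child not in ALLOWED_CHILDREN[parent]:
            problems.append(f"{child} cannot follow {parent}")
    return problems


print(validate_layer_list("drbd,luks,storage"))
print(validate_layer_list("luks,drbd,storage"))
print(validate_layer_list("drbd,drbd,storage"))
```

An empty list means that the layer order is consistent with the table. The second and third calls report an ordering problem and a repeated layer, respectively.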

3.5.1. NVMe-oF/NVMe-TCP LINSTOR レイヤ

NVMe-oF/NVMe-TCPによって、LINSTORはディスクレスリソースを NVMe ファブリックを介してデータが保存されている同じリソースを持つノードに接続することが可能となります。これにより、リソースをローカルストレージを使用せずにマウントすることができ、データにはネットワーク経由でアクセスします。LINSTORはこの場合にDRBDを使用しておらず、そのためLINSTORによってプロビジョニングされたNVMeリソースはレプリケーションされません。データは1つのノードに保存されます。

NVMe-oFはRDMAに対応したネットワークでのみ動作し、NVMe-TCPはIPトラフィックを運ぶことができるネットワークで動作します。ネットワークアダプタの機能を確認するためには、lshw や ethtool などのツールを使用できます。

|

LINSTORとNVMe-oF/NVMe-TCPを使用するには、サテライトとして機能し、リソースにNVMe-oF/NVMe-TCPを使用する各ノードに nvme-cli パッケージをインストールする必要があります。例えば、DEBベースのシステムでは、次のコマンドを入力してパッケージをインストール します:

# apt install nvme-cli

| If you are not on a DEB-based system, use the suitable command for installing packages on your operating system, for example, zypper on SLES or dnf on RPM-based systems. |

To create a resource that uses NVMe-oF/NVMe-TCP, specify an additional parameter when you create the resource definition:

# linstor resource-definition create nvmedata -l nvme,storage

| By default, the -l (layer-stack) parameter is set to drbd,storage when DRBD is used. If you want to create LINSTOR resources with neither NVMe nor DRBD, set the -l parameter to only storage. |

To use NVMe-TCP rather than the default NVMe-oF, set the following property:

# linstor resource-definition set-property nvmedata NVMe/TRType tcp

The property NVMe/TRType can alternatively be set on the resource group or controller level.

Next, create the volume definition for the resource:

# linstor volume-definition create nvmedata 500G

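Before you create the resource on your nodes, determine where the data will be stored locally and which nodes will access it over the network.

First, create the resource on the node where the data will be stored: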

# linstor resource create alpha nvmedata --storage-pool pool_ssd

On the nodes that will access the resource data over the network, the resource has to be defined as diskless:

# linstor resource create beta nvmedata --nvme-initiator

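You can now mount the resource nvmedata on one of your nodes.

| If your nodes have more than one NIC, you should force the route between them for NVMe-oF/NVMe-TCP. Otherwise, having multiple NICs could cause problems. |

3.5.2. Writecache Layer

A DM-Writecache device is composed of two devices: one storage device and one cache device. LINSTOR can set up such a writecache device, but it needs some additional information, such as the storage pool and the size of the cache device.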

# linstor storage-pool create lvm node1 lvmpool drbdpool

# linstor storage-pool create lvm node1 pmempool pmempool

# linstor resource-definition create r1

# linstor volume-definition create r1 100G

# linstor volume-definition set-property r1 0 Writecache/PoolName pmempool

# linstor volume-definition set-property r1 0 Writecache/Size 1%

# linstor resource create node1 r1 --storage-pool lvmpool --layer-list WRITECACHE,STORAGE

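The two properties set in the example are mandatory, but they can also be set on the controller level, where they act as defaults for all resources that have WRITECACHE in their layer list. Note, however, that Writecache/PoolName refers to a storage pool on the corresponding node. If the node does not have a storage pool named pmempool, you will get an error message.

The four mandatory parameters required by DM-Writecache are either configured through a property or determined by LINSTOR. The optional properties listed in the previously mentioned link can also be set through a property. Refer to linstor controller set-property --help for a list of Writecache/* property keys.

If you use --layer-list DRBD,WRITECACHE,STORAGE while DRBD is configured to use external metadata, only the backing device uses the writecache, not the device that holds the external metadata.

3.5.3. Cache Layer

LINSTOR can also set up a DM-Cache device, which is very similar to the DM-Writecache device from the previous section. The major difference is that a cache device is composed of three devices: one storage device, one cache device, and one meta device. The LINSTOR properties are quite similar to those of the writecache but are located in the Cache namespace: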

# linstor storage-pool create lvm node1 lvmpool drbdpool

# linstor storage-pool create lvm node1 pmempool pmempool

# linstor resource-definition create r1

# linstor volume-definition create r1 100G

# linstor volume-definition set-property r1 0 Cache/CachePool pmempool

# linstor volume-definition set-property r1 0 Cache/Cachesize 1%

# linstor resource create node1 r1 --storage-pool lvmpool --layer-list CACHE,STORAGE

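| Unlike the Writecache layer, which is configured through Writecache/PoolName, the only required property of the Cache layer is Cache/CachePool. This is because the Cache layer also has a Cache/MetaPool property, which can be configured separately or else defaults to the value of Cache/CachePool. |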

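Refer to linstor controller set-property --help for a list of Cache/* property keys and the default values of omitted properties.

If you use --layer-list DRBD,CACHE,STORAGE while DRBD is configured to use external metadata, only the backing device uses the cache, not the device that holds the external metadata.

3.5.4. Storage Layer

The storage layer provides new devices from well-known volume managers such as LVM and ZFS. Every layer combination must be based on a storage layer, even if the resource should be diskless. For that case there is a dedicated "diskless" provider type.

For a list of providers and their properties, refer to Storage Providers.

For some storage providers, LINSTOR has special properties: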

- StorDriver/WaitTimeoutAfterCreate: LINSTOR expects a device to appear after it is created (for example, after calls to lvcreate or zfs create). By default, LINSTOR waits 500 ms for the device to appear. You can override this 500 ms default with this property.
- StorDriver/dm_stats: If set to true, LINSTOR calls dmstats create $device after creating a volume and dmstats delete $device --allregions after deleting it. Currently, this is only enabled for the LVM and LVM_THIN storage providers.

3.6. Storage Providers

LINSTOR has several storage providers. The most commonly used ones are LVM and ZFS. Even for those two providers, however, there are already separate sub-types for their thin-provisioned variants.

- Diskless: This provider type is mostly needed so that a storage pool exists that can be configured with LINSTOR properties such as PrefNic, as described in Managing Network Interface Cards.
- LVM / LVM-Thin: The administrator is expected to specify the LVM volume group or the thin pool (in the form "LV/thinpool") to use the corresponding storage type. These drivers support the following property for fine-tuning:
  - StorDriver/LvcreateOptions: The value of this property is appended to every lvcreate … call that LINSTOR executes.
- ZFS / ZFS-Thin: The administrator is expected to specify the ZPool that LINSTOR should use. These drivers support the following property for fine-tuning:
  - StorDriver/ZfscreateOptions: The value of this property is appended to every zfs create … call that LINSTOR executes.
- File / FileThin: Mostly used for demonstration or experiments. LINSTOR reserves a file in a given directory and configures a loop device on top of that file.
- SPDK: The administrator is expected to specify the logical volume store that LINSTOR should use. Using this storage provider implies using the NVME Layer.
- Remote-SPDK: This special storage provider currently needs to run on a "special satellite". Refer to Remote SPDK Provider for more details.

3.6.1. Mixing Storage Pools of Different Storage Providers

While LINSTOR resources are most often backed by storage pools that consist of only one storage provider type, it is possible to use storage pools of different types to back a LINSTOR resource. This is called mixing storage pools. This might be useful when migrating storage resources but could also have some consequences. Read this section carefully before configuring mixed storage pools.

Prerequisites for mixing storage pools:

Mixing storage pools has the following prerequisites:

- LINSTOR version 1.27.0 or later
- LINSTOR satellite nodes need to have DRBD version 9.2.7 (or 9.1.18 if on the 9.1 branch) or later
- The LINSTOR property AllowMixingStoragePoolDriver set to true on the LINSTOR controller, resource group, or resource definition LINSTOR object level

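| Because of the LINSTOR object hierarchy, if you set the AllowMixingStoragePoolDriver property on the LINSTOR controller object (linstor controller set-property AllowMixingStoragePoolDriver true), the property applies to all LINSTOR resources, except for any resource groups or resource definitions where the property is set to false. |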

When storage pools are considered mixed:

LINSTOR considers a setup to be mixing storage pools if either of the following criteria is met:

- The storage pools have different extent sizes. For example, by default LVM has a 4 MiB extent size while ZFS (since version 2.2.0) has a 16 KiB extent size.
- The storage pools have different DRBD initial synchronization strategies, for example, a full initial synchronization or a preparatory partial synchronization. Using zfs and zfsthin storage provider-backed storage pools together would not meet this criterion because they each use a preparatory partial DRBD synchronization strategy.
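The two criteria above combine with a logical OR. A minimal sketch of that decision follows, assuming you already know each pool's extent size and synchronization strategy. The function name pools_are_mixed and the strategy labels are hypothetical illustrations, not LINSTOR code or LINSTOR terminology.

```shell
#!/bin/sh
# Hypothetical helper (not LINSTOR code): decide whether two storage pools
# would count as "mixed" under the two criteria listed above.
# Arguments: extent size of pool A (KiB), sync strategy of pool A,
#            extent size of pool B (KiB), sync strategy of pool B.
# The strategy labels ("full", "partial") are illustrative strings.
pools_are_mixed() {
    [ "$1" -ne "$3" ] || [ "$2" != "$4" ]
}

# zfs and zfsthin: same extent size (16 KiB), same partial strategy.
pools_are_mixed 16 partial 16 partial || echo "zfs + zfsthin: not mixed"
# 4 MiB (LVM default) versus 16 KiB (ZFS) extents: the sizes differ, so the
# strategy arguments are deliberately kept equal to isolate that criterion.
pools_are_mixed 4096 partial 16 partial && echo "4 MiB + 16 KiB extents: mixed"
```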

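Consequences of mixing storage pools:

When you create a LINSTOR resource that is backed by mixed storage pools, there might be consequences that affect LINSTOR features. For example, when you mix lvm and lvmthin storage pools, any resource backed by such a mix is considered a thick resource. This is the mechanism by which LINSTOR allows storage pool mixing.

An exception to this is combining zfs and zfsthin storage pools. LINSTOR does not consider this to be mixing storage pools, because the two storage pools have the same extent size and both use the same DRBD initial synchronization strategy: day 0-based partial synchronization.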

3.6.2. Remote SPDK Provider

A storage pool with the remote SPDK type can only be created on a "special satellite". You first need to start a new satellite by using the following command:

$ linstor node create-remote-spdk-target nodeName 192.168.1.110

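This starts a new satellite instance running on the same machine as the controller. This special satellite performs all the REST-based RPC communication toward the remote SPDK proxy. As the help message of the LINSTOR command shows, the administrator might want to use additional settings when creating this special satellite: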

$ linstor node create-remote-spdk-target -h

usage: linstor node create-remote-spdk-target [-h] [--api-port API_PORT]

[--api-user API_USER]

[--api-user-env API_USER_ENV]

[--api-pw [API_PW]]

[--api-pw-env API_PW_ENV]

node_name api_host

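The difference between the --api-* options and their corresponding --api-*-env versions is that the versions ending in -env look for an environment variable that contains the actual value to use, whereas the --api-* versions take the value directly, and that value is stored in a LINSTOR property. Administrators might not want to store the --api-pw value in plain text, because it would be clearly visible through commands such as linstor node list-property <nodeName>.

After the special satellite is up and running, you can create the actual storage pool: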

$ linstor storage-pool create remotespdk -h

usage: linstor storage-pool create remotespdk [-h]

[--shared-space SHARED_SPACE]

[--external-locking]

node_name name driver_pool_name

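The node_name argument is self-explanatory, name is the name of the LINSTOR storage pool, and driver_pool_name refers to the SPDK logical volume store.

After you create the remotespdk storage pool, the remaining procedure is very similar to using NVMe. First, create the target by creating a simple "diskful" resource, and then create a second resource with the --nvme-initiator option enabled.

3.7. Managing Network Interface Cards

LINSTOR can handle multiple network interface cards (NICs) in a machine. In LINSTOR terminology, these are called "net interfaces".

| When a satellite node is created, a first net interface is created implicitly with the name "default". You can give it a different name by using the --interface-name option of the node create command when you create the satellite node. |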

For existing nodes, additional net interfaces are created like this:

# linstor node interface create node-0 nic_10G 192.168.43.231

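Net interfaces are identified by their IP address only. The name is arbitrary and is not related to the NIC name that Linux uses. Although the name is arbitrary, it must follow these naming restrictions:

- Minimum of 3 characters
- Maximum of 32 characters
- Must begin with a letter (case-insensitive) or an underscore (_)
- Allowed characters are alphanumerics, underscores (_), and hyphens (-)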
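The naming restrictions above can be expressed as a single regular expression. The following sketch checks a candidate name before you pass it to linstor node interface create. The function name is_valid_netif_name is a hypothetical helper, and the check reflects only the four rules listed here.

```shell
#!/bin/sh
# Hypothetical helper (not part of LINSTOR): check a net interface name
# against the naming restrictions listed above.
is_valid_netif_name() {
    # First character: letter or underscore.
    # Remaining 2 to 31 characters: letters, digits, underscores, hyphens.
    printf '%s\n' "$1" | grep -Eq '^[A-Za-z_][A-Za-z0-9_-]{2,31}$'
}

is_valid_netif_name nic_10G && echo "nic_10G: ok"
is_valid_netif_name 10G_nic || echo "10G_nic: rejected (starts with a digit)"
```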

After creating a net interface, assign it to a node so that the node's DRBD traffic is routed through the corresponding NIC:

# linstor node set-property node-0 PrefNic nic_10G

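| You can also set the PrefNic property on a storage pool. DRBD traffic from resources that use the storage pool is then routed through the corresponding NIC. However, be careful here. Any DRBD resource that requires diskless storage (for example, diskless storage acting in a tiebreaker role for DRBD quorum purposes) goes through the default satellite node net interface until you also set the PrefNic property for the "default" net interface. Setups can become complex. It is far easier and safer to set the PrefNic property on the node level. That way, all storage pools on the node, including diskless storage pools, use your preferred NIC. |

If you need to add an interface that is dedicated to controller-satellite traffic, you can add it by using the node interface create command shown earlier. You then modify the connection to make it the active controller-satellite connection. For example, if you added an interface named satconn_1G on all nodes, you can tell LINSTOR to use this interface for controller-satellite traffic by entering the following command: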

# linstor node interface modify node-0 satconn_1G --active

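You can verify this change by using the linstor node interface list node-0 command. The command output should show that the StltCon label applies to the satconn_1G interface.

Although this method routes DRBD traffic through a specified NIC, it is not possible through linstor commands alone to route LINSTOR controller-client traffic, for example, commands that you issue from a LINSTOR client to the controller, through a specific NIC. To achieve this, you can do either of the following: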

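- Specify a LINSTOR controller by using the methods outlined in Using the LINSTOR Client, and make sure that the only route to the controller as specified goes through the NIC that you want to use for controller-client traffic.
- Use Linux tools such as ip route and iptables to filter LINSTOR client-controller traffic, port number 3370, and route it through a specific NIC.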

3.7.1. Creating Multiple DRBD Paths With LINSTOR

To use multiple network paths for DRBD setups, the PrefNic property is not sufficient. Instead, use the linstor node interface and linstor resource-connection path commands, as shown below.

# linstor node interface create alpha nic1 192.168.43.221

# linstor node interface create alpha nic2 192.168.44.221

# linstor node interface create bravo nic1 192.168.43.222

# linstor node interface create bravo nic2 192.168.44.222

# linstor resource-connection path create alpha bravo myResource path1 nic1 nic1

# linstor resource-connection path create alpha bravo myResource path2 nic2 nic2

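In the example, the first four commands define a net interface (nic1 and nic2) on each node (alpha and bravo) by specifying the net interface's IP address. The last two commands create network path entries in the DRBD .res file that LINSTOR generates. This is the relevant part of the generated .res file: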

resource myResource {

...

connection {

path {

host alpha address 192.168.43.221:7000;

host bravo address 192.168.43.222:7000;

}

path {

host alpha address 192.168.44.221:7000;

host bravo address 192.168.44.222:7000;

}

}

}
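When a pair of nodes has more than two NICs, typing one path command per NIC becomes repetitive. The following dry-run sketch prints the resource-connection path create commands for any number of interface names. The function name print_path_cmds is a hypothetical helper, and it assumes that both nodes use the same net interface names, as alpha and bravo do in the example above. It only prints commands and does not execute them.

```shell
#!/bin/sh
# Hypothetical dry-run helper (not part of LINSTOR): print the
# "resource-connection path create" commands that pair up identically named
# net interfaces on two nodes. Nothing is executed; review the output
# before running any of the printed commands.
# Usage: print_path_cmds NODE_A NODE_B RESOURCE NIC...
print_path_cmds() {
    node_a=$1 node_b=$2 resource=$3
    shift 3
    n=1
    for nic in "$@"; do
        echo "linstor resource-connection path create $node_a $node_b $resource path$n $nic $nic"
        n=$((n + 1))
    done
}

print_path_cmds alpha bravo myResource nic1 nic2
```

For the arguments shown, the printed lines match the two path commands from the example above.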

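| Although you can specify a port number to be used for LINSTOR satellite traffic when you create a node interface, this port number is ignored when you create a DRBD resource connection path. Instead, the command assigns a port number dynamically, starting from port number 7000 and incrementing from there. If you want to change the port number range that LINSTOR uses when it dynamically assigns ports, you can set the TcpPortAutoRange property on the LINSTOR controller object. Refer to linstor controller set-property --help for more details. The default range is 7000-7999. |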

How adding a new DRBD path affects the default path